These systems must link front-end UX signals to backend fulfilment and data pipelines so that optimizations manifest as revenue rather than opinions.

Focus on modular data architecture, deterministic telemetry, and governance for any automation layer you deploy; without those, gains from automation and personalization will be inconsistent.

Design decisions must prioritize measurable conversion outcomes over aesthetic hypotheses. Each change should map to an experiment, a KPI, and a rollback plan.

Why eCommerce Best Practices Change Every Year

The cadence of change is driven by three vectors: platform compute and AI capability, consumer device patterns, and regulatory shifts. Each vector shifts what is feasible and what customers tolerate, so a static “best practice” becomes obsolete within months in high-growth sectors.

Adoption of agentic AI architectures has accelerated the rate at which new patterns appear, because these systems enable actions (not just suggestions) that alter customer journeys in real time. Operational readiness and data quality therefore determine who benefits and who is disrupted.

UX and Design Best Practices

UX must be treatable as software: instrumented, iterated, and aligned to conversion metrics. This means design tokens, component libraries, and analytics schemas that persist across releases and A/B tests.

Mobile-First Design

Mobile-first remains non-negotiable for the primary UX surface in 2026 because user sessions and conversion funnels increasingly begin on small screens. Design patterns should prioritise progressive disclosure, touch affordances, and critical-path minimization.

A mobile-first workflow requires early performance budgets and continuous usability testing on representative devices. That discipline reduces rework and prevents desktop-tailored decisions from degrading small-screen outcomes.

Clear Navigation and Search

Navigation and search are the operational heart of product discovery; ambiguity here imposes cognitive tax that kills conversion. Implement predictive search with relevance signals derived from behavioral telemetry and catalog analytics.

Search must integrate with personalization signals to reduce false positives and present the smallest set of high-probability SKUs first. Evaluate search effectiveness through CTR-to-cart and time-to-add metrics.
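As a rough sketch of how those two search metrics could be computed from logged sessions, the snippet below assumes a hypothetical `SearchSession` record and defines CTR-to-cart as the share of clicked searches that end in an add-to-cart. Both the field names and that definition are assumptions, so adjust them to your own analytics schema.

```python
from dataclasses import dataclass
from statistics import median
from typing import Optional


@dataclass
class SearchSession:
    """One search interaction. Field names are hypothetical."""
    query: str
    searched_at: float                        # epoch seconds when results rendered
    clicked: bool                             # shopper clicked at least one result
    added_to_cart_at: Optional[float] = None  # epoch seconds, None if nothing was added


def ctr_to_cart(sessions):
    """Share of clicked searches that led to an add-to-cart."""
    clicked = [s for s in sessions if s.clicked]
    if not clicked:
        return 0.0
    added = [s for s in clicked if s.added_to_cart_at is not None]
    return len(added) / len(clicked)


def median_time_to_add(sessions):
    """Median seconds from search to add-to-cart, or None if there were no adds."""
    deltas = [s.added_to_cart_at - s.searched_at
              for s in sessions if s.added_to_cart_at is not None]
    return median(deltas) if deltas else None


sessions = [
    SearchSession("red shoes", 0.0, True, 30.0),
    SearchSession("blue hat", 0.0, True, None),
    SearchSession("socks", 10.0, False, None),
    SearchSession("belt", 100.0, True, 150.0),
]
print(ctr_to_cart(sessions), median_time_to_add(sessions))
```

Tracking the median rather than the mean keeps a few very slow sessions from masking a relevance regression.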

Performance and Speed Optimization

Page speed directly correlates with conversion and revenue; improvements in Core Web Vitals consistently show measurable lift in engagement and transactions. Instrument load paths and prioritize Time-to-Interactive and Largest Contentful Paint as primary KPIs.

A recommended initial triage is to audit resource waterfall, eliminate third-party blocking, and enforce server-side compression and caching strategies. For Shopify stores specifically, platform-level optimizations and edge caching reduce origin load and improve global TTI; see our Shopify speed optimization service for implementation details.

| Area | Typical Impact | Quick Remediation |
| --- | --- | --- |
| Third-party scripts | High latency, layout shift | Async/defer, load on interaction |
| Unoptimized images | Increased LCP | Serve AVIF/WebP, responsive srcset |
| Large JS bundles | Slow TTI | Code-splitting, tree-shaking |
| No CDN / poor cache | Geographic latency | Deploy CDN, set cache headers |

This table maps common failure modes to remediation patterns so engineers and product owners can prioritise work. Use it as a checklist within sprint planning and pair it with real user monitoring to avoid regressions.
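One way to pair the checklist with real user monitoring is a small budget check that runs against field samples. The sketch below is illustrative only: the budget values, the metric keys, and the shape of `samples` are assumptions, and the percentile uses the simple nearest-rank method.

```python
import math

# Hypothetical budgets in milliseconds. Tune these to your own targets.
BUDGETS_MS = {"lcp": 2500, "tti": 3800}


def percentile(values, pct):
    """Nearest-rank percentile (pct in 0-100) of a non-empty list."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def budget_violations(samples, budgets=BUDGETS_MS, pct=75):
    """Return {metric: observed value} for metrics whose percentile exceeds its budget."""
    violations = {}
    for metric, limit in budgets.items():
        values = samples.get(metric, [])
        if not values:
            continue  # no field data for this metric yet
        observed = percentile(values, pct)
        if observed > limit:
            violations[metric] = observed
    return violations


# Example field samples (made-up numbers).
samples = {
    "lcp": [1800, 2100, 2400, 3200],
    "tti": [3000, 3500, 4200, 5000],
}
print(budget_violations(samples))
```

Running a check like this in CI or on a schedule turns the table into a regression guard rather than a one-off audit.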

Checkout and Payment Optimization

Checkout optimization is a systems problem that spans frontend UX, payment orchestration, fraud rules, and customer communications. Reduce friction by treating checkout as a mini-application with its own feature flagging and observability.

Implement these deliberate steps to reduce abandonment and recover revenue:

- Simplify form fields to only required inputs and support autofill standards.

- Offer local, mobile-friendly payment methods and network tokenization to reduce cart friction.

- Provide clear progress indicators and one-click flows for returning customers.

Integrate payment analytics into your revenue model and instrument drop-off points to generate actionable RCA tickets. Each bullet above should map to an experiment with a clear expected delta in conversion.
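To make "instrument drop-off points" concrete, here is a minimal sketch that turns per-step session counts into drop-off rates and surfaces the worst transition. The step names and counts are hypothetical; real funnels would come from your event pipeline.

```python
# Hypothetical checkout steps, in order.
FUNNEL_STEPS = ["cart", "shipping", "payment", "review", "confirmed"]


def drop_off_by_step(step_counts, steps=FUNNEL_STEPS):
    """Return [(from_step, to_step, drop_rate)] for each consecutive pair of steps."""
    report = []
    for current, nxt in zip(steps, steps[1:]):
        entered = step_counts.get(current, 0)
        continued = step_counts.get(nxt, 0)
        rate = 0.0 if entered == 0 else 1 - continued / entered
        report.append((current, nxt, round(rate, 4)))
    return report


def worst_step(step_counts, steps=FUNNEL_STEPS):
    """Transition with the highest drop-off: a candidate for the next RCA ticket."""
    return max(drop_off_by_step(step_counts, steps), key=lambda row: row[2])


counts = {"cart": 1000, "shipping": 700, "payment": 560, "review": 280, "confirmed": 252}
print(worst_step(counts))
```

The transition returned by `worst_step` is a reasonable first target for an experiment with an explicit expected delta.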

Personalization and Automation

Personalization in 2026 is multichannel, multimodal, and governed; indiscriminate personalization increases churn when it leverages stale or inconsistent data. Build personalization on a canonical customer profile and use confidence thresholds before exposing automated recommendations on high-value pages.

Practical implementation includes offline model retraining cadence, realtime feature serving for session signals, and fallback deterministic rules when model confidence is low. For engineering teams this means separate pipelines for feature computation, model training, and serving, with A/B controls around each.

- Prioritise high-impact touchpoints (homepage, product pages, cart) for model-driven content.

- Maintain deterministic fallbacks to preserve UX when models are unavailable.

Personalization must also be auditable: log inputs, decisions, and outcomes so you can diagnose bias, drift, and revenue impact.
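The confidence threshold, deterministic fallback, and audit logging described in this section can be sketched together in a few lines. Everything named here (`CONFIDENCE_THRESHOLD`, `best_sellers`, the shape of `model_output`) is a hypothetical stand-in, not a reference to any particular recommender.

```python
import json
import time

CONFIDENCE_THRESHOLD = 0.7  # hypothetical cut-off; calibrate per page type


def best_sellers(limit):
    """Deterministic fallback. A real system would read this from the catalog."""
    return ["sku-top-1", "sku-top-2", "sku-top-3"][:limit]


def recommend(user_id, model_output, audit_log, limit=3):
    """Serve model picks only when confident; otherwise use the fallback.

    model_output is None when the model is unavailable, else a dict with
    'skus' (list of SKU ids) and 'confidence' (float in [0, 1]).
    """
    if model_output is not None and model_output["confidence"] >= CONFIDENCE_THRESHOLD:
        source, skus = "model", model_output["skus"][:limit]
    else:
        source, skus = "fallback", best_sellers(limit)

    # Log input, decision, and what was served so the outcome can be joined later.
    audit_log.append(json.dumps({
        "ts": time.time(),
        "user_id": user_id,
        "input": model_output,
        "source": source,
        "served": skus,
    }))
    return skus


log = []
print(recommend("u1", {"skus": ["a", "b"], "confidence": 0.9}, log))
print(recommend("u2", None, log, limit=2))
```

Keeping the fallback path in the same function as the model path means both are logged in the same format, which simplifies later drift and bias analysis.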

Security and Trust Signals

Security signals are not optional content; they are conversion enablers when implemented visibly and correctly. Consumers must see and trust the mechanisms that protect payment and personal data, and engineers must ship minimal-privilege designs and tokenization.

| Trust Signal | Implementation Guidance | Why it Converts |
| --- | --- | --- |
| PCI-compliant payment flow | Use hosted or tokenized checkout | Reduces perceived risk at payment |
| Visible data minimization notice | Inline, contextual explanation | Builds trust without compliance copy |
| Real-time fraud feedback | Adaptive risk scoring with manual override | Lowers false declines and preserves revenue |

Maintain a cadence of security reviews and tabletop exercises that include product, engineering, and legal stakeholders. Trust signals are worthless if they are not paired with operational readiness and rapid incident response.

Research Narration and Applied Evidence

Recent arXiv research demonstrates the efficacy of multimodal recommender systems and agentic conversational agents for eCommerce when they are integrated with strong data governance and latency-aware serving. These systems can raise engagement and CVR but require robust evaluation frameworks before production rollout.

Mobile usability studies also show a shifting landscape of interaction modalities, which validates the imperative for mobile-first patterns and accessible, AI-assisted interactions on small screens. Use these studies to set benchmarks for usability testing and to justify technical investment in adaptive interfaces.

Final Implementation Roadmap

Begin with an audit that maps UX journeys to business KPIs and telemetry. This audit should include a dependency graph of third-party services, critical resource waterfalls, and a prioritised backlog for measurable wins. Use that roadmap to scope short sprints that deliver both technical and product outcomes.

For platform-specific speed work, reference and integrate Shopify-level optimizations such as edge CDN rules and theme bundle splitting; our Shopify speed optimization offering can accelerate this work.

For broader architecture and platform builds, align your backlog with our eCommerce development standards and implementation templates to reduce integration risk.

If you need a practical implementation roadmap, an audit that maps engineering work to conversion lift, or execution support for personalization and checkout orchestration, contact Stellar Soft and request a technical assessment. We will produce a prioritized sprint plan that converts diagnostic findings into measurable revenue impact.

FAQs

What are the best eCommerce practices in 2026?

The best practices in 2026 are those that combine performance, governed personalization, and resilient checkout experiences into a single observable pipeline. They require modular data architecture, continuous measurement, and conservative rollout patterns for automated agents.

How to optimize an online store for conversions?

Target low-hanging technical debt first: reduce page load times, fix critical UX friction in the checkout path, and instrument user journeys to create testable hypotheses. Then apply model-driven personalization to high-impact touchpoints while continuously validating with experiments.

What UX elements impact eCommerce sales most?

Primary elements include page load performance, clarity of product information, trust signals in the checkout, and the relevance of search/recommendations. Combined, these elements determine the friction and the confidence a shopper has before committing to purchase.

Leading platforms now integrate fulfillment workflows with order management systems (OMS) and advanced forecasting models. Operational efficiency is driven by strict latency SLAs for both warehousing processes and last‑mile delivery coordination.

Order fulfillment eCommerce now requires end‑to‑end orchestration between digital storefronts, fulfillment centers, and carrier networks. Distributed inventory strategies optimize proximity to demand clusters while minimizing total delivery distance. This architectural shift reduces average delivery time and total logistics cost, influencing customer satisfaction scores.

In 2026, marketplace platforms incorporate fulfillment telemetry into performance KPIs for third‑party logistics (3PL) providers. These KPIs include pick rate, ship time variance, and delivery success rate for time‑critical segments. Enterprises that align fulfillment metrics with customer retention goals gain measurable competitive advantage.
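As an illustration of how those three KPIs might be derived from order-level telemetry, the sketch below uses hypothetical field names (`units_picked`, `ship_hours`, `delivered_on_time`) and treats ship time as the hours from order placement to carrier handoff. Both are assumptions to adapt to your own data contracts with 3PL partners.

```python
from statistics import pvariance


def three_pl_kpis(orders, labor_hours):
    """Compute pick rate, ship-time variance, and delivery success rate.

    Each order is a dict with hypothetical keys:
      'units_picked'      - units picked for the order
      'ship_hours'        - hours from order placed to carrier handoff
      'delivered_on_time' - bool
    """
    if not orders or labor_hours <= 0:
        raise ValueError("need at least one order and positive labor hours")
    total_units = sum(o["units_picked"] for o in orders)
    ship_times = [o["ship_hours"] for o in orders]
    on_time = sum(1 for o in orders if o["delivered_on_time"])
    return {
        "pick_rate_units_per_hour": total_units / labor_hours,
        "ship_time_variance_h2": pvariance(ship_times),  # population variance, hours squared
        "delivery_success_rate": on_time / len(orders),
    }


orders = [
    {"units_picked": 4, "ship_hours": 10.0, "delivered_on_time": True},
    {"units_picked": 6, "ship_hours": 14.0, "delivered_on_time": True},
    {"units_picked": 10, "ship_hours": 12.0, "delivered_on_time": False},
    {"units_picked": 20, "ship_hours": 12.0, "delivered_on_time": True},
]
print(three_pl_kpis(orders, labor_hours=2))
```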

Each of the components above (inventory location, carrier integration, and performance telemetry) must be engineered with precise protocol interfaces and robust fault tolerance. Integration protocols such as RESTful APIs, event streams (Kafka), and EDI still operate side‑by‑side in hybrid enterprise stacks. This system complexity requires disciplined architectural governance to maintain throughput as order volume scales.

What Is eCommerce Fulfillment?

eCommerce fulfillment refers to the technical and operational processes that begin when an order is placed and end when the parcel is delivered to the customer. It includes inventory receipt, storage, pick/pack operations, shipment processing, and returns handling.

At scale, fulfillment becomes less of a warehouse task and more of a systems engineering challenge. It requires reliable digital infrastructure, robust integrations between platforms, and well-designed workflows – often implemented through custom software development and tailored automation solutions.

Fulfillment is both a logistical and data problem: systems must synchronize physical actions with digital state updates in real time. Orders flow from eCommerce platforms into warehouse systems, shipping carriers, ERP environments, and customer notification services. Achieving this level of coordination typically requires structured system integration services that connect APIs, event streams, and databases into a unified operational pipeline.

In practice, successful eCommerce fulfillment depends on reliable warehouse management systems (WMS) that enforce location control, inventory accuracy, and task allocation. Modern WMS solutions integrate barcode scanning, voice picking, and automated guided vehicles (AGVs) to increase throughput and reduce errors. Building or customizing such systems often involves enterprise software engineering, backend architecture design, and scalable cloud deployment.

Beyond task execution, fulfillment platforms generate large volumes of operational data. Telemetry from picking speed, inventory movement, and delivery timelines feeds analytics dashboards and forecasting models. Organizations frequently rely on data engineering and analytics solutions to process these datasets, ensuring real-time performance monitoring and predictive workload balancing.

Advanced fulfillment networks also implement intelligent routing, dynamic batching, and demand forecasting. These capabilities are typically supported by AI and machine learning development, which enables more accurate demand prediction, anomaly detection, and route optimization.

Fulfillment must satisfy customer expectations for delivery speed and accuracy while minimizing operational costs. Customers increasingly expect same-day or next-day delivery, putting pressure on fulfillment networks to optimize routing, labor deployment, and distributed inventory placement. Supporting this level of responsiveness requires scalable cloud infrastructure and DevOps practices to maintain system reliability under peak loads.

As fulfillment complexity grows, companies invest in micro-fulfillment centers, autonomous sorting technologies, and real-time orchestration platforms. These environments require not only operational efficiency but also resilient architecture, secure data exchange, and continuous system monitoring – areas where structured engineering and integration expertise become critical.

Fulfillment Models Explained

There are multiple fulfillment models employed in eCommerce logistics, each with distinct architectural, cost, and operational properties. These models include in‑house warehousing, dropshipping fulfillment, hybrid fulfillment, and 3PL eCommerce outsourcing. Each model requires different integration patterns with order management systems and varying degrees of operational control.

In‑House fulfillment centralizes physical inventory in company‑owned facilities. It offers maximum control over order flow, quality assurance, and process optimization. However, it also demands capital investment in infrastructure and labor management systems.

Dropshipping fulfillment eliminates the need to hold inventory by having suppliers fulfill directly to customers. It reduces inventory risk but increases dependency on supplier performance and complicates delivery visibility. Dropshipping also requires robust exception handling and notification systems due to variable supplier SLAs.

3PL eCommerce outsourcing delegates fulfillment operations to specialized logistics partners. These partners typically provide multi‑site warehousing, pick/pack services, and carrier negotiation. Integration with 3PLs demands secure API endpoints, real‑time status callbacks, and standardized data interchange protocols.

Hybrid fulfillment combines internal resources with 3PL partnerships to balance scale and control. Hybrid approaches often retain strategic SKUs in company facilities while outsourcing long‑tail SKUs to 3PL partners. This model simplifies seasonal demand scaling without large fixed infrastructure costs.

Below is a table that compares these models across key operational dimensions relevant to fulfillment decision-making.

| Fulfillment Model | Inventory Control | Integration Complexity | Operational Cost Profile |
| --- | --- | --- | --- |
| In‑House | High | Medium | High fixed, low variable |
| Dropshipping Fulfillment | Low | High | Low fixed, moderate variable |
| 3PL eCommerce | Medium | High | Low fixed, moderate variable |
| Hybrid Fulfillment | Medium‑High | Very High | Mixed cost structure |

Each model must be evaluated against corporate objectives and throughput targets. Technical teams must architect fulfillment integrations that allow switching or scaling of models over time.

Costs of eCommerce Fulfillment

Understanding the costs of eCommerce fulfillment is critical for financial planning and pricing strategy. Fulfillment costs include warehousing, labor, packaging, transportation, returns processing, and technology licensing. Each cost category contributes differently to the total cost of goods sold (COGS) and margin erosion.

Warehousing costs encompass facility lease, utilities, rack space, and equipment depreciation. In distributed inventory strategies, warehousing costs scale with the number of fulfillment centers and proximity to demand clusters. These costs are often measured in $/ft²/month and vary widely depending on geographic location and labor market conditions.

Labor costs include pick, pack, and sort operations, often priced per pick or per hour. Advanced automation such as robotics reduces per‑unit labor cost but introduces capital expenditure and maintenance overhead. Labor scheduling systems must optimize resource allocation to reduce idle time and peak overload.

Packaging and materials costs are line‑item charges for boxes, cushioning, labels, and protective materials. Materials cost influences both unit economics and sustainability metrics. Optimization engines that recommend packaging size per SKU can reduce volumetric weight charges from carriers.
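A packaging recommender of the kind mentioned above can start very simply. The sketch below assumes carriers bill on the greater of actual and volumetric weight, with volumetric weight computed as volume divided by a carrier-specific divisor; the value 5000 cm³/kg used here is only an illustrative default, so check each carrier's published divisor. The box catalog is made up.

```python
DIM_DIVISOR_CM3_PER_KG = 5000  # assumption: carriers publish their own divisor


def volumetric_weight_kg(length_cm, width_cm, height_cm, divisor=DIM_DIVISOR_CM3_PER_KG):
    """Volumetric (dimensional) weight of a box."""
    return (length_cm * width_cm * height_cm) / divisor


def billable_weight_kg(actual_kg, box_cm, divisor=DIM_DIVISOR_CM3_PER_KG):
    """Weight the carrier would charge for: the greater of actual and volumetric."""
    return max(actual_kg, volumetric_weight_kg(*box_cm, divisor=divisor))


def smallest_fitting_box(item_cm, boxes_cm):
    """Lowest-volume box the item fits in, allowing axis-aligned rotation."""
    item = sorted(item_cm)
    fitting = [box for box in boxes_cm
               if all(i <= b for i, b in zip(item, sorted(box)))]
    if not fitting:
        return None
    return min(fitting, key=lambda box: box[0] * box[1] * box[2])


boxes = [(40, 30, 20), (30, 20, 10), (60, 40, 40)]  # hypothetical catalog, in cm
box = smallest_fitting_box((25, 8, 18), boxes)
print(box, billable_weight_kg(0.8, box))
```

In this made-up example, shipping the same 0.8 kg item in the 40×30×20 box would be billed at four times the weight of the 30×20×10 box, which is the kind of gap a per-SKU recommendation is meant to close.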

Transportation and shipping costs dominate total logistics expenses for many enterprises. These costs include carrier charges, fuel surcharges, and last‑mile delivery fees. Negotiated carrier rates and zone‑based charge optimization directly influence net shipping cost per order.

Returns and reverse logistics costs cover inspection, repackaging, restocking, and potential disposal or refurbishing of returned goods. Systems must classify returns based on condition and destination, triggering appropriate reverse workflows. Metrics such as return rate and average cost per return are key inputs to fulfillment cost models.

Below is a table that itemizes fulfillment cost categories and technical considerations.

| Cost Category | Key Technical Considerations | Impact on Unit Economics |
| --- | --- | --- |
| Warehousing | Facility management systems, inventory accuracy | Increases fixed and variable cost |
| Labor | Workforce management software, automation | High variable cost without automation |
| Packaging | Package optimization algorithms, environmental compliance | Moderate cost, impacts weight charges |
| Transportation | Carrier APIs, route optimization engines | Major variable cost |
| Returns | Reverse logistics workflows, condition classification | Erodes margin if unmanaged |

Fulfillment cost analysis must inform pricing, promotion, and network design strategies. Engineers and operations planners should build cost simulation models that integrate these categories to forecast margin impact under varying demand scenarios.
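A cost simulation model does not need to be elaborate to be useful. The following sketch combines the cost categories discussed in this section into a per-order figure and compares margin across demand scenarios. All numbers and names (`CostAssumptions`, `product_cost_rate`, the scenario volumes) are illustrative placeholders, not benchmarks.

```python
from dataclasses import dataclass


@dataclass
class CostAssumptions:
    """Illustrative inputs only. Replace with your own figures."""
    warehousing_fixed_per_month: float
    labor_per_order: float
    packaging_per_order: float
    shipping_per_order: float
    return_rate: float       # fraction of orders returned
    cost_per_return: float


def cost_per_order(a, monthly_orders):
    """Fulfillment cost per order: fixed share + variable costs + expected returns cost."""
    if monthly_orders <= 0:
        raise ValueError("monthly_orders must be positive")
    fixed_share = a.warehousing_fixed_per_month / monthly_orders
    variable = a.labor_per_order + a.packaging_per_order + a.shipping_per_order
    expected_returns = a.return_rate * a.cost_per_return
    return fixed_share + variable + expected_returns


def margin_by_scenario(a, avg_order_value, product_cost_rate, scenarios):
    """Return {scenario: margin per order after product and fulfillment cost}."""
    gross = avg_order_value * (1 - product_cost_rate)
    return {name: round(gross - cost_per_order(a, orders), 2)
            for name, orders in scenarios.items()}


assumptions = CostAssumptions(20000, 1.5, 0.5, 6.0, 0.1, 8.0)
scenarios = {"low": 4000, "base": 10000, "peak": 20000}
print(margin_by_scenario(assumptions, avg_order_value=50, product_cost_rate=0.6, scenarios=scenarios))
```

Even this toy model shows how fixed warehousing cost dilutes as volume grows, which is the effect a network design decision needs to weigh against added variable cost.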

Fulfillment Trends in 2026

In 2026, fulfillment trends emphasize automation, predictive analytics, and distributed logistics. Micro‑fulfillment centers (MFCs) located within urban zones reduce delivery time and transport cost. They operate with automated sortation and robotics systems that handle SKU velocity tiers efficiently.

Another trend is predictive workload balancing, which uses machine learning to forecast demand at SKU and node levels. Models trained on time‑series data and external signals like weather and macroeconomic indicators improve labor and inventory allocation. This predictive approach aligns with research on time‑series predictions in supply chain contexts.
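Before investing in models with external signals, it can help to establish a baseline. The sketch below uses simple exponential smoothing, a much simpler technique than the ML models described above, to forecast next-period units at a node and convert that into a headcount estimate. The smoothing factor and the per-picker capacity are assumptions.

```python
import math


def exponential_smoothing_forecast(history, alpha=0.3):
    """One-step-ahead forecast via simple exponential smoothing.

    history: past demand per period, oldest first.
    alpha:   smoothing factor in (0, 1]; higher reacts faster to recent demand.
    """
    if not history:
        raise ValueError("history must not be empty")
    if not 0 < alpha <= 1:
        raise ValueError("alpha must be in (0, 1]")
    level = history[0]
    for observed in history[1:]:
        level = alpha * observed + (1 - alpha) * level
    return level


def pickers_needed(forecast_units, units_per_picker_shift):
    """Round up so forecast demand is fully covered."""
    return math.ceil(forecast_units / units_per_picker_shift)


forecast = exponential_smoothing_forecast([100, 120, 80], alpha=0.5)
print(forecast, pickers_needed(forecast, units_per_picker_shift=40))
```

A learned model should be expected to beat this baseline on held-out periods before it is trusted with labor or inventory allocation.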

Sustainability optimization is rising as a fulfillment KPI. Algorithms now recommend carrier selection based on both cost and carbon footprint, balancing delivery windows with sustainability goals. This trend influences packaging decisions and inventory location strategies.
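One possible shape for a cost-and-carbon carrier selector is a weighted score over normalised values, filtered by the delivery window. This is a sketch under assumptions: the option fields (`cost`, `kg_co2e`, `days`), the min-max normalisation, and the `carbon_weight` parameter are all illustrative choices.

```python
def choose_carrier(options, max_days, carbon_weight=0.5):
    """Pick the carrier with the lowest blended cost/carbon score.

    options: list of dicts with hypothetical keys 'name', 'cost', 'kg_co2e', 'days'.
    max_days: delivery window; slower options are excluded.
    carbon_weight: 0 optimises cost only, 1 optimises carbon only.
    """
    eligible = [o for o in options if o["days"] <= max_days]
    if not eligible:
        return None

    def normalise(key):
        # Min-max scale so cost and carbon are comparable despite different units.
        lo = min(o[key] for o in eligible)
        span = max(o[key] for o in eligible) - lo
        return {o["name"]: 0.0 if span == 0 else (o[key] - lo) / span for o in eligible}

    cost_n, carbon_n = normalise("cost"), normalise("kg_co2e")

    def score(o):
        return (1 - carbon_weight) * cost_n[o["name"]] + carbon_weight * carbon_n[o["name"]]

    return min(eligible, key=score)["name"]


options = [
    {"name": "express", "cost": 5.0, "kg_co2e": 4.0, "days": 2},
    {"name": "green", "cost": 8.0, "kg_co2e": 1.0, "days": 2},
]
print(choose_carrier(options, max_days=3, carbon_weight=1.0))
```

Exposing `carbon_weight` as a parameter makes the trade-off an explicit, testable business decision rather than a hidden constant.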

Real‑time visibility across the fulfillment pipeline is now a baseline requirement. Customers expect order status updates with precise location and delivery time estimates. Fulfillment systems integrate telemetry from warehouse sensors, vehicle GPS, and carrier tracking APIs to generate a unified tracking view.

Collaborative fulfillment networks between retailers and 3PL partners improve capacity utilization. Shared warehousing and fulfillment pooling reduce idle infrastructure cost. These arrangements require standardized integration protocols and shared performance metrics.

The following emerging technical capabilities are shaping fulfillment workflows:

- Robotic process automation (RPA) for repetitive warehouse tasks, reducing manual error rates.

- Edge computing at fulfillment nodes to process telemetry locally and reduce latency.

These technical enablers reduce cycle times and improve operational throughput. Engineering organizations must evaluate them against cost, complexity, and ecosystem readiness.

Conclusion

In 2026, eCommerce fulfillment systems operate at the intersection of logistics, automation, and real‑time data orchestration. Fulfillment models and cost structures must align with business goals while satisfying customer delivery expectations. Technical leaders should prioritize architectural scalability, predictive intelligence, and performance telemetry to optimize fulfillment networks.

If your organization is evaluating eCommerce fulfillment strategies or needs expert guidance to implement integrated fulfillment systems with advanced automation, Stellar Soft is ready to assist. Our team specializes in architecting scalable fulfillment solutions tailored to enterprise requirements.

Contact Stellar Soft to optimize your eCommerce fulfillment infrastructure and reduce total cost of logistics while improving delivery performance.

FAQs

What is eCommerce fulfillment?

eCommerce fulfillment is the complete set of systems and processes that manage the lifecycle of an online order from purchase to delivery. It includes inventory handling, picking, packing, shipping, tracking, and reverse logistics mechanisms.

What fulfillment models exist?

Fulfillment models include in‑house warehousing, dropshipping fulfillment, 3PL eCommerce outsourcing, and hybrid fulfillment strategies. Each model has distinct integration requirements and cost profiles.

How much does fulfillment cost in 2026?

Fulfillment cost in 2026 consists of warehousing, labor, packaging, transportation, and returns processing components. Costs vary by geography, automation level, and carrier agreements; engineering teams should use analytical models to estimate total fulfillment cost accurately.

Hyper‑personalization uses real‑time data streams, behavioral signals, and AI models to refine eCommerce personalization far beyond traditional segmentation. This section outlines the operational benefits and gives concrete examples of systems that improve business outcomes.

The primary benefit of hyper‑personalization is measured uplift in conversion rates through more relevant content delivery. Data from operational systems shows that exposure to contextually relevant product recommendations increases click‑through rates and order values. For example, dynamic home‑page content that adapts to user history can drive 10-30% increases in key revenue metrics.

Hyper‑personalization also reduces customer churn by aligning product offers with observed preferences and predicted trajectories. An enterprise retailer using predictive models reported a 15% reduction in abandonment across high‑traffic segments within six months. These operational benefits are quantifiable and repeatable when properly implemented.

What Is Hyper-Personalization?

Hyper‑personalization is the real‑time adaptation of eCommerce experiences based on individual user data and context. Unlike basic eCommerce personalization, which uses static profiles or broad segments, hyper‑personalization applies algorithmic inference to produce individualized responses. It leverages multi‑modal data sources (transactional, behavioral, and contextual) to refine customer journey personalization continuously.

Technically, the core of hyper‑personalization is a real‑time decision engine that orchestrates inputs from recommendation systems, session analytics, and predictive models. These engines correlate patterns from previous interactions with probabilistic user models to select optimal content and offers. See foundational research on contextual bandits in web personalization, which offers insight into decision policies under uncertainty.
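To give a feel for a decision policy under uncertainty, here is a deliberately simple contextual bandit: an epsilon-greedy policy that keeps a separate reward estimate per (context, arm) pair. It is a teaching sketch, not the method used by any specific engine; the arm names, contexts, and reward signal are hypothetical.

```python
import random
from collections import defaultdict


class EpsilonGreedyBandit:
    """Per-context epsilon-greedy policy over a fixed set of arms."""

    def __init__(self, arms, epsilon=0.1, seed=None):
        self.arms = list(arms)
        self.epsilon = epsilon
        self.rng = random.Random(seed)
        self.counts = defaultdict(int)    # (context, arm) -> number of updates
        self.values = defaultdict(float)  # (context, arm) -> mean observed reward

    def select(self, context):
        """Explore with probability epsilon, otherwise pick the best-known arm."""
        if self.rng.random() < self.epsilon:
            return self.rng.choice(self.arms)
        return max(self.arms, key=lambda arm: self.values[(context, arm)])

    def update(self, context, arm, reward):
        """Fold a new reward into the running mean for this context and arm."""
        key = (context, arm)
        self.counts[key] += 1
        self.values[key] += (reward - self.values[key]) / self.counts[key]


bandit = EpsilonGreedyBandit(["banner_a", "banner_b"], epsilon=0.1, seed=42)
bandit.update("mobile", "banner_b", reward=1.0)  # e.g. a click or conversion
bandit.update("mobile", "banner_a", reward=0.0)
print(bandit.select("mobile"))
```

Production systems typically replace the lookup table with a model over context features, but the explore/exploit loop and the incremental reward update follow the same outline.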

In practice, a hyper‑personalized eCommerce system might adjust messaging, prices, and product recommendations within milliseconds of a user action. This requires distributed data pipelines, low‑latency inference layers, and robust A/B validation frameworks. Hyper‑personalization is not a single tool but an integrated system of data capture, modeling, and delivery.

Difference Between Personalization and Hyper-Personalization

eCommerce personalization refers to tailoring user experiences based on aggregated category data and heuristic rules. It includes simple rule‑based content blocks such as “Customers like you also viewed” or “Top sellers in your region.” This form of personalization typically updates on a daily or session basis and does not incorporate real‑time contextual inference.

Hyper‑personalization, by contrast, uses AI personalization to model individual user states continuously and applies real‑time personalization to adjust interactions. It integrates machine learning predictions, behavioral vectors, and context awareness to produce granular experience adjustments. The result is highly specific content sequencing, which traditional eCommerce personalization cannot deliver due to architectural limitations.

Personalization generally operates on static segments defined by demographics or broad behavioral buckets. Hyper‑personalization uses dynamic user embeddings, predictive scoring, and decision‑level fusion across algorithms. This enables real‑time adaptation, such as recommending products based on the current session trajectory rather than historical profile alone.

| Dimension | eCommerce Personalization | Hyper‑Personalization |
| --- | --- | --- |
| Data Usage | Batch historical data | Real‑time streams + historical context |
| Modeling Approach | Rule‑based, segmentation | ML inference, dynamic embeddings |
| Response Time | High latency updates | Millisecond‑level decisioning |
| Context Sensitivity | Low | High |
| Customer Journey Integration | Partial | Full, continuous process |

Hyper‑personalization requires specialized infrastructure and modeling expertise that differs significantly from traditional eCommerce personalization. Organizations must audit their data pipelines and processing latencies to qualify for true hyper‑personalization deployment. The distinctions above underscore why many enterprises treat these as separate stages in technical maturation.

Technologies Behind Hyper-Personalization

To enable hyper‑personalization, eCommerce platforms require a technical stack that supports real‑time data processing, predictive analytics, and agile deployment. At the core are streaming data frameworks like Apache Kafka and event tracking layers that capture every user interaction. These technologies replace periodic batch jobs with continuous ingestion, enabling up‑to‑the‑moment user state.

Machine learning models operationalize AI personalization by predicting next‑best actions and user intent. Models such as recurrent neural networks (RNNs), transformers, or deep factorization machines can encode sequential behavior and latent preferences. Research on sequential recommendation systems demonstrates performance gains from attention‑based architectures.

The inference layer must integrate with eCommerce APIs to serve personalized content without perceptible delay. Low‑latency model serving frameworks like TensorFlow Serving or ONNX Runtime are common in high‑throughput settings. These systems expose model outputs to frontend components, which then render the user interface dynamically.

Additional important technologies include:

- Session state stores that preserve context across multi‑page journeys.

- Feature stores that standardize and serve predictive variables to models.

Integration between these technologies must prioritize robustness and observability. Without proper monitoring and model validation, systems degrade and fail to deliver consistent personalized eCommerce experiences.
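Session context ultimately depends on grouping raw events into sessions. The sketch below shows a gap-based sessionization over in-memory tuples; the 30-minute inactivity gap is a common convention but an assumption here, and a streaming deployment would apply the same rule incrementally rather than over a sorted batch.

```python
SESSION_GAP_SECONDS = 30 * 60  # assumption: 30 minutes of inactivity ends a session


def sessionize(events, gap=SESSION_GAP_SECONDS):
    """Group (user_id, timestamp, action) events into per-user sessions.

    A new session starts when a user's next event arrives more than
    `gap` seconds after their previous one.
    Returns {user_id: [[(timestamp, action), ...], ...]}.
    """
    sessions = {}
    for user_id, ts, action in sorted(events, key=lambda e: (e[0], e[1])):
        user_sessions = sessions.setdefault(user_id, [])
        last_ts = user_sessions[-1][-1][0] if user_sessions else None
        if last_ts is not None and ts - last_ts <= gap:
            user_sessions[-1].append((ts, action))   # continue current session
        else:
            user_sessions.append([(ts, action)])     # start a new session
    return sessions


events = [
    ("u1", 0, "view"),
    ("u1", 600, "add_to_cart"),
    ("u1", 4000, "view"),
    ("u2", 100, "view"),
]
print(sessionize(events))
```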

Real-World Use Cases

Real‑world use cases of hyper‑personalization span product discovery, dynamic pricing, and cart abandonment mitigation. Retailers implement AI personalization to reorder search results based on inferred purchase intent. This improves relevance and reduces search friction for complex catalogs.

In travel and hospitality eCommerce, systems adjust offers dynamically to match user context, such as travel dates, device type, and previous interactions. Hotels may surface specific room types based on predicted value maximization. Airlines apply real‑time personalization to ancillary offers, which increases attach rates significantly.

Subscription services leverage hyper‑personalization to adjust onboarding flows according to user behavior within the first session. Predictive churn models identify at‑risk users and trigger targeted incentives. These interventions improve long‑term engagement metrics and subscription renewal rates.

Notable benefits achieved in enterprise deployments include:

- Reduced bounce rates through adaptive landing pages tailored to individual interests.

- Increased average order values via contextually relevant cross‑sell recommendations.

These gains are measurable, and the use cases above illustrate the practical applications of advanced eCommerce personalization strategies in high‑performance digital commerce environments.

Final Thoughts

Hyper‑personalization is a proven advancement in eCommerce personalization strategies that materially improves customer engagement, conversion rates, and business outcomes. It requires a coordinated stack of real‑time data capture, predictive modeling, and decision orchestration technologies. When implemented with precision and adherence to data governance standards, hyper‑personalization transforms static eCommerce platforms into adaptive, intelligent systems.

For organizations committed to advancing their digital commerce capabilities, Stellar Soft offers enterprise‑grade solutions that implement scalable AI personalization, real‑time personalization engines, and strategic integration frameworks. Contact Stellar Soft to evaluate your current eCommerce stack and begin architecting a system capable of delivering truly personalized eCommerce experiences at scale.

Contact Stellar Soft today to optimize your eCommerce personalization strategy.

FAQs

What is hyper‑personalization?

Hyper‑personalization is a system‑level approach to customizing eCommerce interactions at the individual level using real‑time data, AI, and predictive modeling. It contrasts with rule‑based personalization by continuously adapting content and offers based on dynamic user state.

How does personalization improve conversions?

Personalization improves conversions by increasing the relevance of content and recommendations to the current user’s intent. Data‑driven matching between user preferences and product offers reduces friction and guides decision‑making, which elevates conversion probability. Empirical studies of eCommerce systems confirm that personalized product suggestions improve key performance indicators such as click‑through and purchase rates.

What tools enable hyper‑personalization?

Tools that enable hyper‑personalization include streaming data platforms (e.g., Apache Kafka), feature and model stores, machine learning frameworks (TensorFlow, PyTorch), and real‑time model serving infrastructures. Additionally, analytics platforms that support sessionization and behavioral segmentation are necessary to provide the contextual signals that drive individualized decisions.

Models trained on historical data may perform well in validation but diverge under live input distributions, leading to unpredictable errors and user harm. An audit validates assumptions and makes hidden risks visible before customers are exposed.

Organizations that skip audits typically discover failures through customer complaints or regulatory inquiries rather than controlled testing. The result is expensive remediation, lost trust, and operational disruption. Pre-launch audits convert guesswork into verifiable controls.

Common Reasons AI Products Fail in Production

A primary cause of failure is brittle assumptions about input data: sampling bias, label noise, or feature drift invalidate model behavior quickly. Models that do not tolerate minor shifts in input distributions will produce unreliable outputs when the environment changes. This is a systems problem, not only an algorithmic one.

Another frequent reason is insufficient observability and feedback loops. Teams release models without mechanisms to detect degradation, making silent failure likely. Lack of monitoring means that regressions are only visible after significant user impact.

Operational and engineering debt also drives failure. Machine learning systems accrue a distinctive form of technical debt: entanglement between preprocessing, training, and production code, which increases maintenance cost and reduces agility. This phenomenon has been documented as a structural risk in production ML environments.

Human factors and organizational gaps compound technical faults: unclear ownership, missing runbooks, and absent incident escalation procedures turn recoverable anomalies into outages. Without defined roles and processes, small incidents become crises. Good governance prevents this escalation.

The Role of Quality Audits in AI Development

Quality audits serve as formal checkpoints that evaluate risk across data, models, and infrastructure. They codify acceptance criteria and verify that those criteria are met through reproducible tests and documentation. An audit enforces accountability across engineering, data science, product, and legal teams.

Audits also standardize documentation practices that accelerate problem diagnosis. Model cards and datasheets are examples of artifacts that capture intended use, evaluation results, and dataset provenance. These documents reduce misuse and inform decision makers about model limitations.

Below is a focused checklist of operational audit scopes that should be applied to any AI product before release.

- Data lineage and integrity: verify provenance, transformation steps, and checksum or schema validation across pipelines.

- Model validation and robustness: confirm cross-validation, calibration, adversarial testing, and out-of-distribution evaluation.

The checklist above is a minimal operational scope; each item should produce objective pass/fail signals and versioned artifacts. Audits are only useful when their findings are actionable and assigned to owners.
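As an illustration of the data lineage and integrity item, the sketch below shows one way to turn schema validation and checksums into pass/fail signals. The schema, field names, and helper names are hypothetical, and a real pipeline would more likely rely on a dedicated validation library.

```python
import hashlib
import json

# Hypothetical expected schema: field name -> Python type.
EXPECTED_SCHEMA = {"user_id": str, "price": float, "quantity": int}

def validate_schema(record: dict, schema: dict) -> list[str]:
    """Return a list of human-readable violations (an empty list means pass)."""
    errors = []
    for field, expected_type in schema.items():
        if field not in record:
            errors.append(f"missing field: {field}")
        elif not isinstance(record[field], expected_type):
            errors.append(f"wrong type for {field}: {type(record[field]).__name__}")
    for field in record:
        if field not in schema:
            errors.append(f"unexpected field: {field}")
    return errors

def dataset_checksum(records: list[dict]) -> str:
    """SHA-256 over a canonical JSON encoding (key order ignored, row order kept)."""
    canonical = json.dumps(records, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()
```

Storing the checksum next to each dataset version gives the audit a cheap way to confirm that the data evaluated is the data that was trained on.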

Below is a second list that describes governance artifacts and processes every audit must check.

- Documentation artifacts: model cards, datasheets, training logs, and evaluation notebooks are present and versioned.

- Operational controls: runbooks, rollback procedures, monitoring thresholds, and incident SLAs are defined and tested.

Those governance items convert technical validation into operational readiness. Missing artifacts often correlate with longer mean time to recovery after incidents.

Data & Model Validation Failures

Data validation failures are often silent and insidious because training datasets rarely reflect production variability comprehensively. Common manifestations include label drift, feature distribution mismatch, and missing upstream validations that allow corrupted inputs into training. Detecting these issues requires automated data-quality pipelines that compute distribution statistics, label stability, and schema drift continuously.
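One way to compute a distribution statistic of the kind described above is the Population Stability Index (PSI), sketched here in plain Python. The bin count and the small floor for empty bins are assumptions chosen for illustration; a common rule of thumb reads values above roughly 0.25 as a significant shift, but that cut-off is a convention rather than something this article prescribes.

```python
import math

def psi(expected: list[float], actual: list[float], bins: int = 10) -> float:
    """Population Stability Index between a reference sample and a live sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 when the reference is constant

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values
        # A small floor avoids log(0) for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))
```

Running this per feature on a schedule, with the training sample as `expected`, yields a drift score that can feed an alert rule.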

Model validation failures extend beyond conventional accuracy metrics and require scenario-based testing. Validate calibration, subgroup performance, and failure modes under perturbations and noisy inputs. Tools like model cards make these evaluations explicit and document performance across demographic and contextual slices.
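Calibration can be made concrete with expected calibration error (ECE), which compares average confidence with observed accuracy inside each confidence bin. The following is a minimal sketch; the function name and the choice of ten equal-width bins are assumptions.

```python
def expected_calibration_error(confidences, correct, bins=10):
    """Weighted average gap between mean confidence and accuracy per bin."""
    n = len(confidences)
    # Per bin: [sample count, sum of confidences, number of correct predictions]
    totals = [[0, 0.0, 0] for _ in range(bins)]
    for conf, ok in zip(confidences, correct):
        idx = min(int(conf * bins), bins - 1)
        totals[idx][0] += 1
        totals[idx][1] += conf
        totals[idx][2] += int(ok)
    ece = 0.0
    for count, conf_sum, hits in totals:
        if count:
            ece += (count / n) * abs(conf_sum / count - hits / count)
    return ece
```

Computing the same number separately for each subgroup or context slice shows whether the model is overconfident for some cohorts only.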

Adversarial and stress testing must be part of validation to surface brittle decision boundaries. Inject synthetic perturbations, simulate downstream system failures, and test graceful degradation. If a model does not degrade predictably, it is not production-ready.
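A very small version of perturbation testing is sketched below: add bounded noise to each input and record the largest change in output. `toy_model` is a hypothetical stand-in for a real model, and the noise level and trial count are arbitrary assumptions; this is random-noise testing, not a true adversarial search.

```python
import random

def max_output_shift(model, inputs, noise=0.01, trials=20, seed=0):
    """Largest absolute output change when inputs receive small uniform noise."""
    rng = random.Random(seed)  # seeded so the check is repeatable
    worst = 0.0
    for x in inputs:
        baseline = model(x)
        for _ in range(trials):
            noisy = [v + rng.uniform(-noise, noise) for v in x]
            worst = max(worst, abs(model(noisy) - baseline))
    return worst

# Hypothetical stand-in for a real model: a fixed linear scorer.
def toy_model(features):
    return 0.5 * features[0] - 0.2 * features[1]
```

An acceptance test can then assert that the shift stays under a bound agreed in the audit, which is one way to express "degrades predictably" as a pass/fail signal.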

Security, Bias & Compliance Risks

AI products increase attack surface areas and regulatory exposure when security and compliance are not audited. Threat models must include model-specific attacks such as model inversion, membership inference, and prompt injection in generative systems. Absent mitigations, sensitive training signals and PII can be exfiltrated through model outputs or side channels.

Bias and fairness audits detect disparate impacts that standard metrics can hide. Dataset documentation practices such as datasheets and fairness audits provide quantitative measures of disparate error rates and disparate impact across protected groups. These practices are vital for both ethical and legal risk management.
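As a rough sketch of one such quantitative measure, the code below computes the disparate impact ratio: the lowest group selection rate divided by the highest. Group labels and function names are hypothetical. A commonly cited rule of thumb flags ratios below 0.8 for investigation; treat that threshold as an assumption to confirm against the rules that apply to you.

```python
def selection_rates(predictions, groups):
    """Share of positive predictions per group."""
    totals, positives = {}, {}
    for pred, group in zip(predictions, groups):
        totals[group] = totals.get(group, 0) + 1
        positives[group] = positives.get(group, 0) + int(pred == 1)
    return {g: positives[g] / totals[g] for g in totals}

def disparate_impact(predictions, groups):
    """Ratio of the lowest to the highest selection rate (1.0 means parity)."""
    rates = selection_rates(predictions, groups)
    highest = max(rates.values())
    return min(rates.values()) / highest if highest else 1.0
```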

Compliance validation must establish data retention policies, consent records, and auditable lineage for inferred attributes. Regulatory readiness requires mapping technical controls to legal obligations; without this mapping, an audit cannot certify launch readiness. Security and compliance gaps are immediate blockers for production deployment.

How AI Audits Improve Product Reliability

Audits materially increase reliability by converting vague risk statements into reproducible checks, remediation plans, and monitoring commitments. They force teams to codify assumptions and prove them under testable conditions. The effect is a measurable reduction in incident frequency and severity.

The table below maps common audit domains to practical checks and expected outputs, enabling engineering teams to operationalize audit findings.

| Audit Domain | Concrete Checks | Expected Artifact |
| --- | --- | --- |
| Data Integrity | Schema validation, missing-value ratios, lineage checks | Versioned dataset manifests, drift reports |
| Model Validation | Cross-validation, calibration, OOD testing | Evaluation reports, model cards |
| Security & Privacy | Threat model, access controls, PII masking | Security assessment, access audit logs |
| Performance | p95/p99 latency tests, throughput under load | Load test reports, autoscaling policies |
| Monitoring | Drift alerts, accuracy monitors, business KPI hooks | Alert rules, runbooks, monitoring dashboards |

These artifacts must be stored in a retrievable audit repository to support both operational decisions and external review.

The next table ties monitoring signals to automated remediation actions and human intervention thresholds that audits should validate.

| Monitoring Signal | Automated Action | Human Action Threshold |
| --- | --- | --- |
| Input distribution shift | Capture data snapshot, raise alert, queue for retraining | Data scientist review within defined SLA |
| Accuracy degradation | Temporarily route to fallback model, notify engineering | Incident review, rollback decision |
| Spike in latency | Autoscale inference cluster, enable degraded mode UI | Ops investigation and performance tuning |
| Suspicious outputs | Quarantine sessions for manual review | Product/ethics review and patching |
| Security anomaly | Revoke keys, initiate forensic logging | Security incident response activation |

An audit validates that automated actions actually execute under test and that human escalation paths are exercised via drills.
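A drill of this kind could be sketched as a playbook that maps each monitoring signal to an action, plus a runner that fires every signal once and reports any that have no handler. The signal and action names below are hypothetical labels loosely based on the table rows, and the recorder actions only log that they ran.

```python
from typing import Callable

def make_recorder(name: str, log: list) -> Callable[[dict], None]:
    """Build a stand-in action that only records that it ran."""
    def action(event: dict) -> None:
        log.append((name, event["signal"]))
    return action

def run_drill(playbook: dict, signals: list[str]) -> list[str]:
    """Fire each signal once; return the signals with no registered action."""
    unhandled = []
    for signal in signals:
        action = playbook.get(signal)
        if action is None:
            unhandled.append(signal)
        else:
            action({"signal": signal})
    return unhandled
```

In a real drill the recorders would be replaced by the production handlers pointed at a test environment, and a non-empty `unhandled` list would fail the audit item.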

Building Trustworthy AI Products at Scale

AI systems are valuable only if they operate reliably, safely, and within legal and ethical boundaries under live conditions. A structured quality audit reduces deployment risk by translating abstract hazards into verifiable tests, documented artifacts, and operational controls. Organizations that institutionalize audits reduce silent failure modes and accelerate safe innovation.

Adopt auditing as a continuous discipline: version artifacts, automate validation, and connect monitoring to retraining and incident response. Leverage community practices such as model cards and datasheets to make evaluation transparent, and incorporate research-grade drift detection and monitoring techniques to detect degradation early.

If your team needs a formal AI audit checklist, implementation assistance for data-quality pipelines, or a production-grade monitoring stack, Stellar Soft can design and execute a tailored audit program. Contact Stellar Soft to operationalize AI quality controls and reduce deployment risk before your next release.

FAQs

Why do AI products fail?

AI products fail primarily because assumptions made during development do not hold in production. Data drift, unvalidated models, poor monitoring, and weak operational ownership cause systems to degrade silently until failures become visible to users or regulators.

What causes AI-generated product issues?

Issues arise from low-quality or biased data, overfitted models, and insufficient testing across real-world scenarios. Additional causes include security gaps, unclear usage boundaries, and lack of feedback loops that prevent timely correction.

How does a quality audit prevent AI failures?

A quality audit enforces systematic validation of data, models, infrastructure, and governance before launch. It identifies failure modes early, documents limitations, and establishes monitoring and remediation procedures that reduce incident frequency and severity.

What risks come from unchecked AI systems?

Unchecked AI systems introduce operational instability, legal and compliance exposure, and reputational damage. They can amplify bias, leak sensitive data, make unreliable decisions at scale, and become costly to correct once embedded in critical business processes.

An AI audit checklist functions as a pre-launch control mechanism that translates abstract “readiness” into verifiable criteria. In 2026, audits are no longer optional hygiene; they are a structural requirement for production AI.

This article defines a concrete AI audit checklist grounded in engineering practice, data governance, and operational risk management. Each section explains what must be audited, why it matters, and how gaps manifest after launch. The perspective is technical and implementation-oriented, aligned with production-grade AI delivery.

Why AI Audits Are Critical Before Production

AI audits are critical because AI systems behave probabilistically and evolve over time. Unlike deterministic software, model outputs depend on data distributions, inference environments, and user interaction patterns that shift post-deployment. Audits impose discipline on systems that would otherwise degrade silently.

Pre-launch audits reduce downstream costs by identifying structural risks early. Research on production ML failures shows that most incidents originate from preventable design or governance gaps rather than model novelty. An audit enforces accountability across data, models, infrastructure, and monitoring.

Audits also serve as organizational alignment tools. They force agreement on acceptance thresholds, ownership, and escalation paths before real users are exposed. Without this alignment, teams respond reactively to incidents instead of preventing them.

1. Data Quality & Integrity Review

Data quality is the foundation of any AI system, and its audit must precede model evaluation. This review verifies that training, validation, and inference data are accurate, representative, traceable, and governed. If data integrity is weak, downstream model metrics are misleading by definition.

Key audit checks include schema validation, missing-value analysis, label consistency, and feature distribution stability. Data lineage must be documented so every feature can be traced to its source system and transformation logic. Integrity reviews also assess whether consent, retention, and usage constraints are respected.
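Two of these checks, missing-value analysis and label consistency, are simple enough to sketch directly. The row format (a list of dicts) and the function names are assumptions made for illustration.

```python
from collections import defaultdict

def missing_value_ratios(rows, columns):
    """Fraction of rows where each column is absent or None."""
    return {c: sum(1 for r in rows if r.get(c) is None) / len(rows) for c in columns}

def conflicting_labels(rows, feature_keys, label_key):
    """Feature combinations that appear with more than one distinct label."""
    seen = defaultdict(set)
    for r in rows:
        seen[tuple(r.get(k) for k in feature_keys)].add(r[label_key])
    return {key: labels for key, labels in seen.items() if len(labels) > 1}
```

Identical feature rows carrying different labels are a cheap first indicator of label noise; they do not prove it, since the features may simply be insufficient to separate the cases.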

A data audit should explicitly evaluate bias risk at the dataset level. Sampling imbalance, proxy features, and historical bias propagate directly into model behavior. Empirical studies demonstrate that dataset bias often dominates algorithmic bias in deployed systems.

2. Model Accuracy & Bias Testing

Model audits move beyond single accuracy scores. They evaluate whether the model performs consistently across scenarios, cohorts, and time. A model that performs well on average but fails on edge cases or protected groups is not production-ready.

Audit procedures should include cross-validation, out-of-distribution testing, calibration analysis, and subgroup performance comparison. Bias testing must be quantitative, using metrics such as disparate impact, equalized odds, or error parity where applicable. These results should be documented, not verbally summarized.
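For equalized odds, one possible summary number is the largest between-group gap in true-positive or false-positive rate. The sketch below assumes binary labels and predictions and uses hypothetical function names; groups with no positives or no negatives get a rate of 0.0, which is a simplification.

```python
def rates_by_group(y_true, y_pred, groups):
    """True-positive and false-positive rates per group."""
    counts = {}
    for t, p, g in zip(y_true, y_pred, groups):
        c = counts.setdefault(g, {"tp": 0, "fn": 0, "fp": 0, "tn": 0})
        key = ("tp" if p else "fn") if t else ("fp" if p else "tn")
        c[key] += 1
    out = {}
    for g, c in counts.items():
        pos, neg = c["tp"] + c["fn"], c["fp"] + c["tn"]
        out[g] = (c["tp"] / pos if pos else 0.0, c["fp"] / neg if neg else 0.0)
    return out

def equalized_odds_gap(y_true, y_pred, groups):
    """Largest between-group difference in TPR or FPR (0.0 means no gap)."""
    rates = list(rates_by_group(y_true, y_pred, groups).values())
    tprs, fprs = [r[0] for r in rates], [r[1] for r in rates]
    return max(max(tprs) - min(tprs), max(fprs) - min(fprs))
```

Writing the gap and the per-group rates into the evaluation report keeps the result documented rather than verbally summarized.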

The following table outlines core model audit dimensions and the corresponding validation focus areas.

| Audit Dimension | What Is Evaluated | Typical Failure Signal |
| --- | --- | --- |
| Predictive accuracy | Task-specific performance metrics | Overfitting to validation set |
| Calibration | Confidence vs actual correctness | Overconfident wrong predictions |
| Robustness | Sensitivity to noise or perturbations | Large output variance |
| Bias & fairness | Performance across cohorts | Disparate error rates |
| Explainability | Feature attribution stability | Non-intuitive drivers |

Model audits must be reproducible. Every metric should be regenerable from versioned data and code. If results cannot be reproduced, they cannot be trusted.

3. Security & Compliance Validation

Security and compliance audits ensure that the AI system does not introduce unacceptable legal or operational exposure. This validation covers data handling, model access, inference endpoints, and integration boundaries. In regulated contexts, it also maps controls to applicable legal frameworks.

Security checks include authentication, authorization, encryption, secrets management, and input validation. AI-specific risks such as model inversion, prompt injection, or data exfiltration through outputs must be assessed. Threat modeling should explicitly include the model as an attack surface.

Compliance validation confirms adherence to privacy, record-keeping, and auditability requirements. This includes documenting data sources, retention policies, and user consent flows. Governance research emphasizes that lack of documentation is a primary blocker in post-incident investigations.

The following bullet list summarizes minimum security and compliance audit items that must be satisfied before launch:

- Verified access controls, encryption, and secure credential handling across the AI stack.

- Documented data governance, consent management, and compliance mappings.

Security and compliance are not post-launch patches. If unresolved at audit time, they invalidate launch readiness.

4. Performance & Scalability Testing

Performance audits verify that the AI system meets reliability and responsiveness requirements under realistic conditions. This includes inference latency, throughput, resource utilization, and failure behavior. Performance that only works in staging environments is not acceptable.

Testing must simulate peak loads, cold starts, and degraded infrastructure scenarios. Metrics should focus on tail latency (p95, p99) rather than averages. Scalability audits also assess whether autoscaling policies respond correctly to demand spikes.
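To show why tail latency and averages tell different stories, here is a small nearest-rank percentile helper. It is a sketch for load-test post-processing; the function name is an assumption, and other percentile definitions (for example, interpolated ones) give slightly different values.

```python
import math

def percentile(samples, pct):
    """Nearest-rank percentile: smallest sample with at least pct% of values at or below it."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]

# Hypothetical latencies in ms: 98 fast requests and 2 slow ones.
latencies_ms = [100] * 98 + [2000, 2500]
mean_ms = sum(latencies_ms) / len(latencies_ms)  # 143.0, which hides the slow requests
p99_ms = percentile(latencies_ms, 99)            # 2000, which exposes them
```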

The table below defines common performance audit metrics and their operational relevance.

| Metric | Why It Matters | Audit Expectation |
| --- | --- | --- |
| p95 / p99 latency | User experience and SLA adherence | Within defined thresholds |
| Throughput | Ability to handle peak demand | Sustained at projected load |
| Resource utilization | Cost and stability | Predictable under stress |
| Failure recovery time | System resilience | Automated and bounded |
| Degradation behavior | Safety under overload | Graceful fallback |

Performance audits should be repeated after any material model or infrastructure change. Performance is not a one-time certification; it is an ongoing property.

5. Monitoring, Logging & Post-Launch Controls

An AI audit is incomplete without validating post-launch controls. Monitoring and logging determine whether issues are detected early or discovered through user complaints. A system without observability is operationally blind.

Audit checks include input data drift detection, output distribution monitoring, accuracy tracking with delayed labels, and system health metrics. Logging must be structured, queryable, and compliant with privacy constraints. Alert thresholds and escalation paths must be defined before launch.
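Accuracy tracking with delayed labels usually means joining logged predictions with ground truth that arrives later. A minimal sketch, assuming both are keyed by a hypothetical request ID, is shown here; it also reports label coverage, since accuracy over a small labeled fraction can mislead.

```python
def accuracy_with_delayed_labels(predictions: dict, labels: dict):
    """Accuracy over predictions whose label has arrived, plus label coverage.

    Returns (accuracy, coverage); accuracy is None when no labels have arrived.
    """
    matched = [rid for rid in predictions if rid in labels]
    if not matched:
        return None, 0.0
    hits = sum(1 for rid in matched if predictions[rid] == labels[rid])
    return hits / len(matched), len(matched) / len(predictions)
```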

The bullet list below outlines essential post-launch control mechanisms that should be audited:

- Automated monitoring for data drift, performance degradation, and abnormal outputs.

- Defined rollback, retraining, and human-in-the-loop intervention procedures.

Research on concept drift shows that unattended models degrade predictably over time. Monitoring is therefore a preventive control, not a diagnostic luxury.

Launching AI Products With Confidence

Launching AI products with confidence requires more than model accuracy claims. It requires evidence that the system has been audited across data, models, infrastructure, and governance. An AI audit checklist converts readiness from opinion into proof.

Organizations that institutionalize pre-launch AI audits experience fewer incidents, faster recovery, and higher stakeholder trust. Audits also scale; once formalized, they accelerate future launches instead of slowing them down. In 2026, mature AI teams treat audits as part of delivery, not as a barrier.

Stellar Soft helps organizations design and execute rigorous AI audits, from data integrity reviews to production monitoring frameworks. If you are preparing an AI system for launch and need a structured pre-launch AI audit, contact Stellar Soft to reduce deployment risk and move to production with confidence.

FAQs

What is an AI audit?

An AI audit is a structured evaluation of data, models, infrastructure, and governance controls to determine whether an AI system is safe, compliant, and production-ready. It translates technical risk into explicit acceptance criteria.

Why is an AI audit important before launch?

AI audits identify failure modes that are expensive or irreversible after deployment. They reduce operational, legal, and ethical risk by enforcing discipline before real users are affected.

What should be included in an AI audit checklist?

A complete checklist covers data quality, model validation, bias testing, security and compliance, performance, scalability, and monitoring. Each item must be testable and documented.

How to reduce AI deployment risks?

Reduce risk by treating audits as gate reviews, automating validation where possible, and assigning clear ownership for remediation. Governance frameworks show that accountability reduces incident severity.

Failures after launch are costly: they impact customers, revenue, and engineering capacity. This checklist identifies concrete, testable signals that indicate an AI application is not ready for public release.

Each sign below is grounded in engineering, data, and operational practice rather than marketing rhetoric. Treat these signs as gate criteria for a launch readiness review. For each sign, I explain the technical rationale and pragmatic remediation steps.

Why AI Product Readiness Matters

AI product readiness matters because models interact with real people and live data distributions that differ from training sets. The difference between research prototypes and production systems includes observability, robustness, and governance. A successful launch requires operational controls that keep models performing safely and predictably.

Organizations that skip readiness disciplines frequently encounter silent degradation: models that appear functional but slowly lose value as data shifts or edge cases accumulate. Building production-grade ML systems is an engineering problem that also requires continuous monitoring, retraining pipelines, and formal acceptance criteria. Consider production readiness as the union of model quality, data quality, performance, security, and observability; each dimension is necessary.

Evidence from production-system studies shows that systems designed with end-to-end validation and monitoring substantially reduce incident frequency and mean time to remediation. Production-ready ML frameworks document operational patterns for validation, rollback, and human-in-the-loop controls.

Sign #1: Unvalidated AI Models

An unvalidated model is one that has not undergone rigorous out-of-sample, adversarial, and scenario testing. Validation must go beyond holdout accuracy to include calibration, robustness to distributional shifts, and performance on targeted edge cases. If your model’s acceptance tests are limited to a single static test set, it is not validated.

Validation should include unit tests for preprocessing, integration tests for feature pipelines, and stress tests for unusual inputs. Adversarial tests and out-of-distribution checks must be automated and repeatable. Implementing systematic explainability checks also surfaces fragile model behavior early; monitoring and explainability practices help identify where models will behave unexpectedly in production.

Sign #2: Poor Data Quality or Bias Issues

If training and validation datasets contain label noise, sampling bias, or feature drift, the model will inherit these defects. Poor data quality manifests as inconsistent predictions, unexplained error spikes, and differential performance across cohorts. Organizations that lack data-quality scoring and bias detection cannot reliably predict production behavior.

Run data-quality pipelines that compute completeness, uniqueness, distributional parity, and label stability metrics before any release. Apply fairness and bias audits that measure disparate impact and other standardized fairness metrics across relevant subgroups. These audits should be part of the pre-launch gate; unresolved bias findings are a clear red flag.

Sign #3: Performance & Scalability Gaps

If the model cannot meet production latency and throughput targets under realistic load, launch should be delayed. Performance problems show up as request timeouts, queue buildups, or degraded user experience. Evaluate the entire inference stack (model architecture, serving runtime, batching, and autoscaling policies) under load that reflects anticipated peak demand.

Measure p95 and p99 latencies, not just average response time, and validate that tail latency meets SLA. Also validate resource consumption under sustained load and cold-start scenarios. The table below provides common performance indicators and conservative thresholds you should validate before launch.

| Performance Indicator | Meaning | Conservative Pre-Launch Threshold |
| --- | --- | --- |
| p95 latency | 95th percentile request duration | ≤ target SLA (e.g., 200–500 ms) |
| p99 latency | Tail latency under load | ≤ 2× p95 |
| Throughput (RPS) | Requests per second sustained | ≥ projected peak × safety factor |
| Cold start time | Latency when service scales from zero | Measured and acceptable to UX |
| Memory / GPU utilization | Resource use per model instance | Stable under stress tests |

Performance and scalability are not solely about faster hardware. They require profiling, model optimization (quantization, distillation), and careful orchestration of serving infrastructure. Research on inference-efficient model design and scheduling demonstrates that architecture choices materially change latency and cost profiles.
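The numeric thresholds in the table above can be expressed as an automated gate. The sketch below covers the three rows that reduce to simple comparisons; the function name and the default safety factor of 1.5 are assumptions, not values from the table.

```python
def performance_gate(p95_ms, p99_ms, sustained_rps, *, sla_ms, peak_rps, safety_factor=1.5):
    """Return the names of failed checks; an empty list means the gate passes."""
    checks = {
        "p95_within_sla": p95_ms <= sla_ms,
        "p99_within_2x_p95": p99_ms <= 2 * p95_ms,
        "throughput_headroom": sustained_rps >= peak_rps * safety_factor,
    }
    return [name for name, ok in checks.items() if not ok]
```

For example, `performance_gate(180, 400, 600, sla_ms=200, peak_rps=500)` fails on tail latency and on throughput headroom, which would block the release until both are addressed.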

Sign #4: Missing Security & Compliance Checks

If you have not performed threat modeling, data access reviews, and compliance mapping, your product is not safe to launch. Security and regulatory requirements vary by domain, but every AI product must guard against data leakage, privilege escalation, and malicious inputs. Absence of encryption in transit and at rest, lack of role-based access control, or unvetted third-party data feeds are immediate red flags.

Security checks should include input validation, adversarial-input defenses, secure secrets management, and logging for forensic analysis. Compliance checks need data lineage, consent records, and privacy impact assessments where applicable. The bullet list below summarizes minimum security and compliance controls that must be in place before launch.

- Conduct a formal threat model and mitigation plan for model and data flows.

- Validate encryption, access controls, audit logging, and data retention policies.

These controls are non-negotiable for regulated industries and recommended universally. Failing to implement security and compliance controls is a primary cause of regulatory action and critical incidents.

Sign #5: Lack of Monitoring & Feedback Loops

If you cannot detect drift, performance degradation, or user complaints automatically, the app is not ready. Monitoring must cover data inputs, model outputs, latency, and business metrics; it must also include alerting thresholds and escalation procedures. Without automated detection of concept drift and an actionable retraining pipeline, models silently lose effectiveness.

Define the signals that trigger retraining or human review, and implement continuous evaluation against these signals. The table below maps common monitoring signals to automated actions and human interventions, which should be codified in runbooks before any launch.

| Monitoring Signal | Automated Action | Human Intervention |
| --- | --- | --- |
| Input distribution shift | Raise alert, capture batch for retraining | Data scientist review of features |
| Label drift / accuracy drop | Lower confidence thresholds, degrade model | Root-cause analysis; model rollback |
| Latency spike | Autoscale inference cluster, scale down async jobs | Ops investigation and hotfix |
| Unusual user feedback | Flag sessions for human review | Product/UX researcher follow-up |
| Rising error rates | Circuit breaker to safe fallback model | Engineering incident response |

Monitoring is not passive telemetry. It must be connected to automated remediation paths and retraining pipelines that reduce time to recovery. Recent work on concept-drift detection provides practical algorithms for industrial monitoring.
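One automated remediation path, the circuit breaker to a safe fallback model, might look like the sketch below. The class name, window size, and error-rate threshold are assumptions, and the breaker deliberately omits recovery logic: once open it stays open, whereas a production breaker would typically add a timed probe to close again.

```python
class FallbackBreaker:
    """Route to a fallback once the recent primary error rate crosses a threshold."""

    def __init__(self, primary, fallback, window=20, max_error_rate=0.5):
        self.primary, self.fallback = primary, fallback
        self.window, self.max_error_rate = window, max_error_rate
        self.outcomes = []  # one entry per primary call; True means it failed

    def is_open(self):
        recent = self.outcomes[-self.window:]
        return len(recent) == self.window and sum(recent) / self.window >= self.max_error_rate

    def predict(self, x):
        if self.is_open():
            return self.fallback(x)  # breaker open: skip the primary entirely
        try:
            result = self.primary(x)
            self.outcomes.append(False)
            return result
        except Exception:
            self.outcomes.append(True)
            return self.fallback(x)  # single failure: still answer from the fallback
```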

How to Prepare AI Products for a Successful Launch

Prepare your AI product by converting the signs above into gate criteria and automated test suites. Build retraining pipelines, implement end-to-end observability, validate fairness, and harden security controls. Launch readiness is achieved when engineering, data science, product, and legal teams jointly certify the product against agreed acceptance criteria.

Adopt a staged launch strategy: internal dogfooding, limited beta, monitored ramp, and full rollout. Each stage should exercise different parts of the system under realistic conditions and provide labeled feedback for iterative improvement. Finally, document runbooks and rollback procedures so incidents are resolvable within defined SLAs.
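The staged strategy can be encoded so that promotion between stages is an explicit, testable decision. In this sketch the stage names follow the paragraph above, while `next_stage` and the single boolean gate are simplifying assumptions; in practice the gate would aggregate the readiness checks for that stage.

```python
STAGES = ["internal_dogfood", "limited_beta", "monitored_ramp", "full_rollout"]

def next_stage(current: str, gate_passed: bool) -> str:
    """Advance one stage only when the current stage's gate passed; otherwise hold."""
    idx = STAGES.index(current)
    if not gate_passed or idx == len(STAGES) - 1:
        return current
    return STAGES[idx + 1]
```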

If your team needs help operationalizing these readiness practices, Stellar Soft can design validation frameworks, monitoring stacks, and production-grade inference architectures tailored to your domain and risk profile. Contact Stellar Soft to perform a launch readiness audit and implement the engineering controls that prevent costly post-release failures.

FAQs

How do you know if an AI app is ready for launch?

You know readiness by passing a checklist that covers model validation, data quality, performance and scalability, security and compliance, and monitoring with feedback loops. Each dimension must have objective acceptance criteria and automated tests.

What are common AI product launch mistakes?

Common mistakes include relying only on holdout test accuracy, ignoring skew between training and production data, under-engineering inference infrastructure, and lacking incident playbooks. These mistakes produce silent failures and customer harm.

Why do AI apps fail after launch?

AI apps fail due to distributional shift, unhandled edge cases, insufficient observability, and missing governance. Failures are often systemic rather than single-point bugs; they reflect gaps in operationalizing ML.

What should be tested before launching an AI app?

Test suites should include unit tests for data pipelines, integration tests for model and service interactions, end-to-end acceptance tests, adversarial/edge-case scenarios, load tests for inference, security penetration tests, and A/B experimentation plans. Each test must have pass/fail criteria.

Platforms are no longer evaluated on reach or impressions alone but on their contribution to pipeline velocity and customer lifetime value. This shift reflects tighter alignment between social channels and enterprise revenue systems.

The increasing complexity of customer journeys requires more precise attribution across touchpoints. Social media interactions often occur upstream of conversion, making naive last-click models insufficient. As a result, advanced attribution frameworks now dominate ROI analysis.

In 2026, social media ROI trends are shaped by automation, AI-driven analytics, and cross-channel data unification. Brands that fail to adapt to these trends struggle to justify social media investment. The following sections analyze how ROI is defined, measured, and optimized under modern conditions.

What Social Media ROI Means in Modern Marketing

Social media ROI refers to the quantified business value generated relative to social media investment. This value includes revenue, lead quality, retention uplift, and cost efficiency. It excludes vanity metrics unless they correlate statistically with downstream outcomes.

In modern marketing, social media return on investment is calculated across long attribution windows. Social interactions influence awareness, consideration, and trust rather than immediate purchase alone. This necessitates probabilistic modeling rather than deterministic attribution.

Social media ROI now depends on data integration across CRM, analytics platforms, and ad systems. Without unified identifiers and event-level data, ROI measurement becomes fragmented. Research on causal inference in marketing highlights the necessity of counterfactual modeling to isolate true impact.

Measurement maturity also requires separating paid social ROI from organic social performance. Each channel exhibits distinct cost structures, scaling behavior, and marginal returns. Treating them as a single channel distorts performance interpretation.

Top Social Media ROI Trends in 2026

The dominant social media ROI trends in 2026 reflect structural changes in analytics and automation. These trends redefine how marketers allocate budget and evaluate performance. They also increase the technical barrier to accurate ROI measurement.

AI-Driven Attribution Models

AI-driven attribution models replace rigid rule-based frameworks with probabilistic inference. These models estimate the marginal contribution of each interaction across channels. They account for sequence order, time decay, and cross-platform influence.

Modern attribution models rely on deep learning architectures such as recurrent neural networks and transformers. These models capture temporal dependencies in customer journeys. Academic work on sequence-based attribution demonstrates superior accuracy over linear models.

AI attribution improves marketing ROI tracking by reducing bias toward bottom-funnel channels. Social media interactions receive proportional credit even when they occur early. This rebalances budget allocation decisions.
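The idea of crediting each channel with its marginal contribution can be illustrated without a neural model. The sketch below uses Shapley values, a game-theoretic technique that is simpler than the sequence models described above and ignores ordering and time decay. The conversion rates are invented for illustration, and the exact computation over all orderings is only practical for a handful of channels.

```python
from itertools import permutations

def shapley_attribution(channels, value):
    """Average marginal contribution of each channel over all orderings."""
    credit = {c: 0.0 for c in channels}
    orderings = list(permutations(channels))
    for order in orderings:
        seen = frozenset()
        for channel in order:
            with_channel = seen | {channel}
            credit[channel] += value(with_channel) - value(seen)
            seen = with_channel
    return {c: total / len(orderings) for c, total in credit.items()}

# Hypothetical conversion rates for each set of channels a user touched.
CONVERSION = {
    frozenset(): 0.00,
    frozenset({"social"}): 0.02,
    frozenset({"search"}): 0.04,
    frozenset({"social", "search"}): 0.10,
}

credits = shapley_attribution(["social", "search"], CONVERSION.get)
```

With these made-up numbers, social receives about 0.04 and search about 0.06 of the 0.10 combined conversion rate, so the upstream channel gets credit for the lift it adds when paired with search rather than only for its standalone rate.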

Advanced Social Media Analytics & Automation

Advanced social media analytics in 2026 extend beyond dashboards into autonomous decision systems. Machine learning models now optimize bid strategies, creative rotation, and posting schedules. These systems operate continuously rather than in campaign cycles.

Automation improves ROI by reducing response latency. Underperforming creatives are deprecated automatically, while high-performing variants receive increased exposure. Research on reinforcement learning for marketing optimization supports this adaptive approach.

Analytics pipelines increasingly integrate sentiment analysis and engagement quality scoring. These signals predict downstream conversion likelihood more effectively than raw engagement counts. As a result, brands prioritize signal quality over volume.

ROI Comparison: Paid vs Organic Social

Paid social ROI and organic social performance diverge significantly in 2026. Paid channels offer predictable scaling but exhibit diminishing marginal returns. Organic channels scale more slowly but compound over time.

The table below compares paid and organic social channels across ROI dimensions.

| Dimension | Paid Social ROI | Organic Social Performance |
| --- | --- | --- |
| Cost structure | Variable, bid-based | Fixed, labor-driven |
| Scaling behavior | Immediate but saturating | Gradual and compounding |
| Attribution clarity | High (platform-level) | Moderate (multi-touch) |
| Creative lifespan | Short | Long |
| Marginal ROI trend | Decreasing with spend | Increasing with consistency |

This comparison highlights why mature strategies combine both channels. Overreliance on either model introduces risk and volatility. Balanced allocation improves long-term social media return on investment.

How Brands Can Improve Social Media ROI

Improving social media ROI requires structural changes rather than tactical experimentation. Brands must align data architecture, measurement frameworks, and execution systems. Optimization without measurement fidelity produces false positives.

The first step is implementing unified event tracking across platforms. This enables consistent measurement of social media performance metrics. Event schemas should align with revenue events rather than engagement abstractions.
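One way to picture unified event tracking is a single normalized record that every platform's payload is mapped into. The sketch below is an assumption-heavy illustration: the platform names, raw field names, and the `SocialEvent` schema are all hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class SocialEvent:
    user_id: str            # unified identifier shared with the CRM
    platform: str
    event_type: str         # e.g. "click", "add_to_cart", "purchase"
    occurred_at: datetime
    revenue: float = 0.0    # revenue-aligned field, zero for non-revenue events


# Hypothetical per-platform field names; real payloads will differ.
FIELD_MAPS = {
    "platform_a": {"user_id": "uid", "event_type": "action", "ts": "time", "revenue": "value"},
    "platform_b": {"user_id": "customer", "event_type": "event", "ts": "timestamp", "revenue": "amount"},
}


def normalize(platform: str, raw: dict) -> SocialEvent:
    """Map a raw platform payload onto the shared schema."""
    m = FIELD_MAPS[platform]
    return SocialEvent(
        user_id=str(raw[m["user_id"]]),
        platform=platform,
        event_type=str(raw[m["event_type"]]).lower(),
        occurred_at=datetime.fromtimestamp(raw[m["ts"]], tz=timezone.utc),
        revenue=float(raw.get(m["revenue"], 0.0)),
    )


event = normalize("platform_a", {"uid": 42, "action": "Purchase", "time": 0, "value": "19.99"})
print(event)
```

Keeping `revenue` on the event itself is what lets downstream reports tie social activity to revenue events rather than to engagement counts.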

The second step involves adopting attribution models aligned with business cycles. Short purchase cycles tolerate simpler models, while long cycles require sequence-aware attribution. Choosing the wrong model leads to misallocated spending.

Two actions tend to have the highest impact on ROI:

- Implement multi-touch, AI-based attribution models aligned with real customer journeys

- Integrate social analytics with CRM and revenue systems for closed-loop measurement

These actions require coordination between marketing, data, and engineering teams. Tool adoption alone is insufficient without governance and shared definitions.

Optimization also depends on creative intelligence. Content performance should be evaluated using uplift modeling rather than engagement averages. This isolates causality rather than correlation.
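The simplest form of this idea compares an exposed group with a holdout group. The sketch below is only that two-group difference, not a full uplift model, and the group sizes and conversion counts are invented for illustration.

```python
def conversion_uplift(treated_conversions, treated_size, control_conversions, control_size):
    """Difference in conversion rate between exposed and holdout groups."""
    if treated_size <= 0 or control_size <= 0:
        raise ValueError("group sizes must be positive")
    treated_rate = treated_conversions / treated_size
    control_rate = control_conversions / control_size
    return treated_rate - control_rate


# Hypothetical test: 2,000 users saw the content, 2,000 were held out.
lift = conversion_uplift(120, 2000, 80, 2000)
print(f"uplift: {lift:.3f}")  # uplift: 0.020
```

Here the content converts at 6% against a 4% baseline, so it is credited with roughly two percentage points, regardless of how much engagement it collected. Random assignment to the two groups is what makes that difference interpretable as causal.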

At the execution level, two further improvements apply:

- Automate creative testing and budget reallocation using performance thresholds

- Segment ROI reporting by audience cohort and lifecycle stage
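Threshold-based reallocation can be sketched in a few lines. The rule below is a deliberately simple assumption (pause anything under a ROAS floor, then share its budget among the rest in proportion to ROAS); the thresholds and creative names are hypothetical.

```python
def reallocate(creatives, min_roas=1.0, min_spend=50.0):
    """Pause creatives below `min_roas` and move their budget to the others.

    creatives: dict of name -> {"spend", "revenue", "budget"}.
    Creatives with less than `min_spend` are left untouched (too little data).
    """
    new_budget = {}
    winners = {}
    freed = 0.0
    for name, c in creatives.items():
        if c["spend"] < min_spend or c["spend"] <= 0:
            new_budget[name] = c["budget"]
            continue
        roas = c["revenue"] / c["spend"]
        if roas < min_roas:
            new_budget[name] = 0.0      # paused
            freed += c["budget"]
        else:
            new_budget[name] = c["budget"]
            winners[name] = roas
    total = sum(winners.values())
    for name, roas in winners.items():
        new_budget[name] += freed * roas / total  # share freed budget by ROAS
    return new_budget  # if there are no winners, freed budget stays unallocated


creatives = {
    "a": {"spend": 100.0, "revenue": 300.0, "budget": 100.0},
    "b": {"spend": 100.0, "revenue": 50.0, "budget": 100.0},
    "c": {"spend": 100.0, "revenue": 100.0, "budget": 100.0},
    "d": {"spend": 10.0, "revenue": 0.0, "budget": 50.0},
}
print(reallocate(creatives))
# {'a': 175.0, 'b': 0.0, 'c': 125.0, 'd': 50.0}
```

The `min_spend` guard matters: without it, a new creative with almost no data would be paused before it had a fair chance to perform.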

Brands that treat social media as a performance channel rather than a branding silo achieve superior ROI. Measurement discipline is the defining factor.

Turning Social Media Performance Into Measurable Growth

Social media ROI in 2026 is a function of measurement rigor, not platform novelty. Brands that invest in attribution accuracy and analytics integration outperform those chasing surface metrics. Social media return on investment now reflects system design choices as much as creative execution.

The convergence of AI, automation, and unified data pipelines transforms social media into a measurable growth engine. This evolution demands technical maturity and cross-functional alignment. Organizations that adapt early secure compounding advantages.

If your organization is seeking to measure social media ROI, implement advanced attribution models, or improve marketing ROI tracking across paid and organic channels, Stellar Soft provides the technical expertise to design data-driven, scalable solutions.

Contact Stellar Soft to transform social media performance into measurable, defensible business growth.

FAQs

What is social media ROI?

Social media ROI is the measurable business value generated from social media activities relative to their cost. It includes revenue impact, lead contribution, and efficiency gains rather than engagement alone.

How do you measure social media ROI in 2026?

In 2026, brands measure social media ROI using AI-driven multi-touch attribution models. These models integrate data from ad platforms, analytics tools, and CRM systems to estimate marginal impact.

What metrics define social media success?

Social media success is defined by revenue-aligned metrics such as conversion lift, cost per acquisition, and customer lifetime value. Engagement metrics are used only when predictive of these outcomes.

Which social platforms deliver the best ROI?

ROI varies by industry, audience, and funnel position rather than platform alone. Platforms with strong intent signals and advanced targeting typically deliver higher paid social ROI.

How is AI changing social media ROI tracking?

AI enables probabilistic attribution, automated optimization, and predictive performance modeling. These capabilities reduce bias and improve allocation efficiency across channels.

Voice commerce replaces graphical navigation with conversational interfaces powered by natural language processing and speech recognition. This transition alters both frontend interaction models and backend orchestration logic.

Voice Commerce: How It Actually Works in Real Life

Instead of describing complex architecture, let’s look at how global leaders have implemented voice shopping to reduce friction.

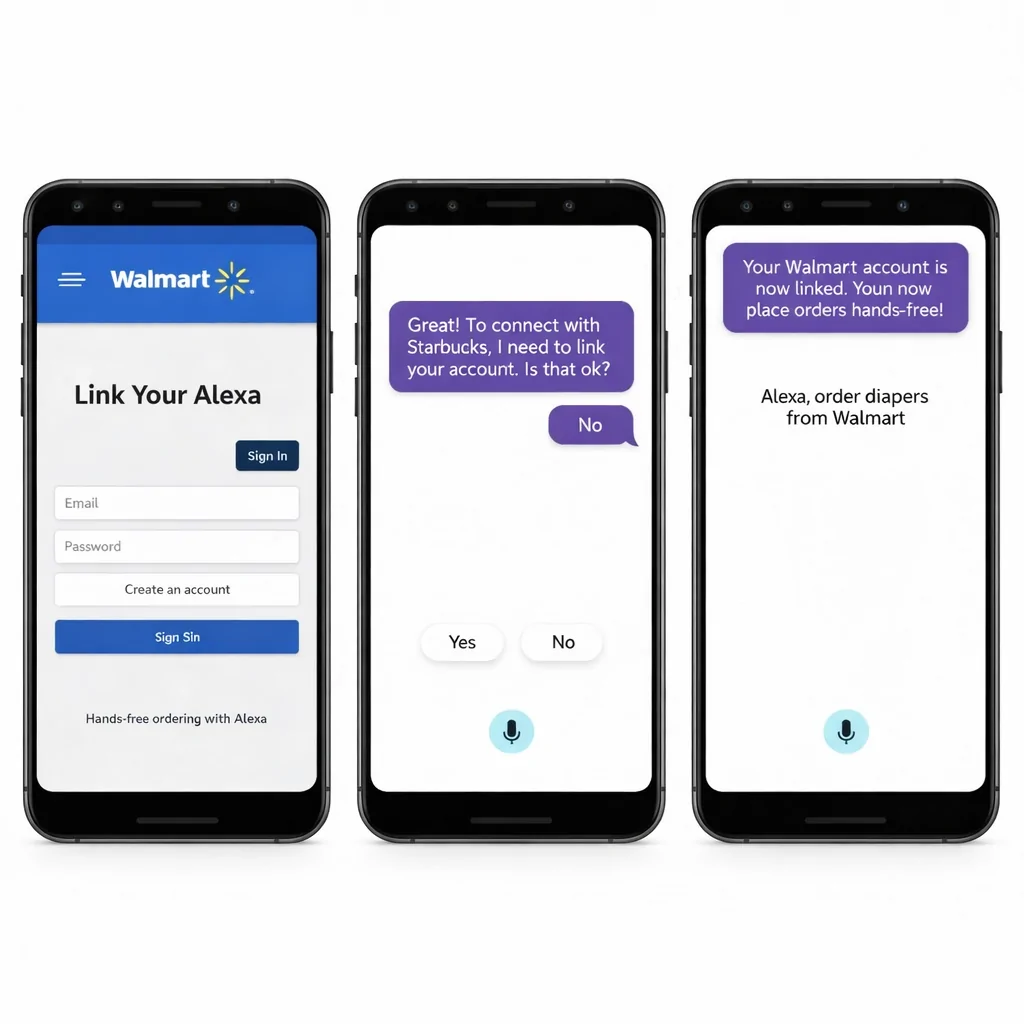

Example 1: Groceries with Walmart (Google Assistant)

Walmart has partnered with Google to allow customers to manage their shopping carts entirely by voice. To activate it, a user simply says, “Hey Google, talk to Walmart.”

- Learning Preferences: The system learns the brands you love. Instead of saying “one gallon of 1 percent Great Value organic milk,” you can just say “milk,” and Walmart adds the specific item you regularly buy.

- Cart Management: Users can issue commands like “Add 4 more bananas,” “What’s in my cart?”, or “I don’t want bananas anymore.”

- Utility: With over 100,000 grocery items available, the focus is on speed and daily essentials.

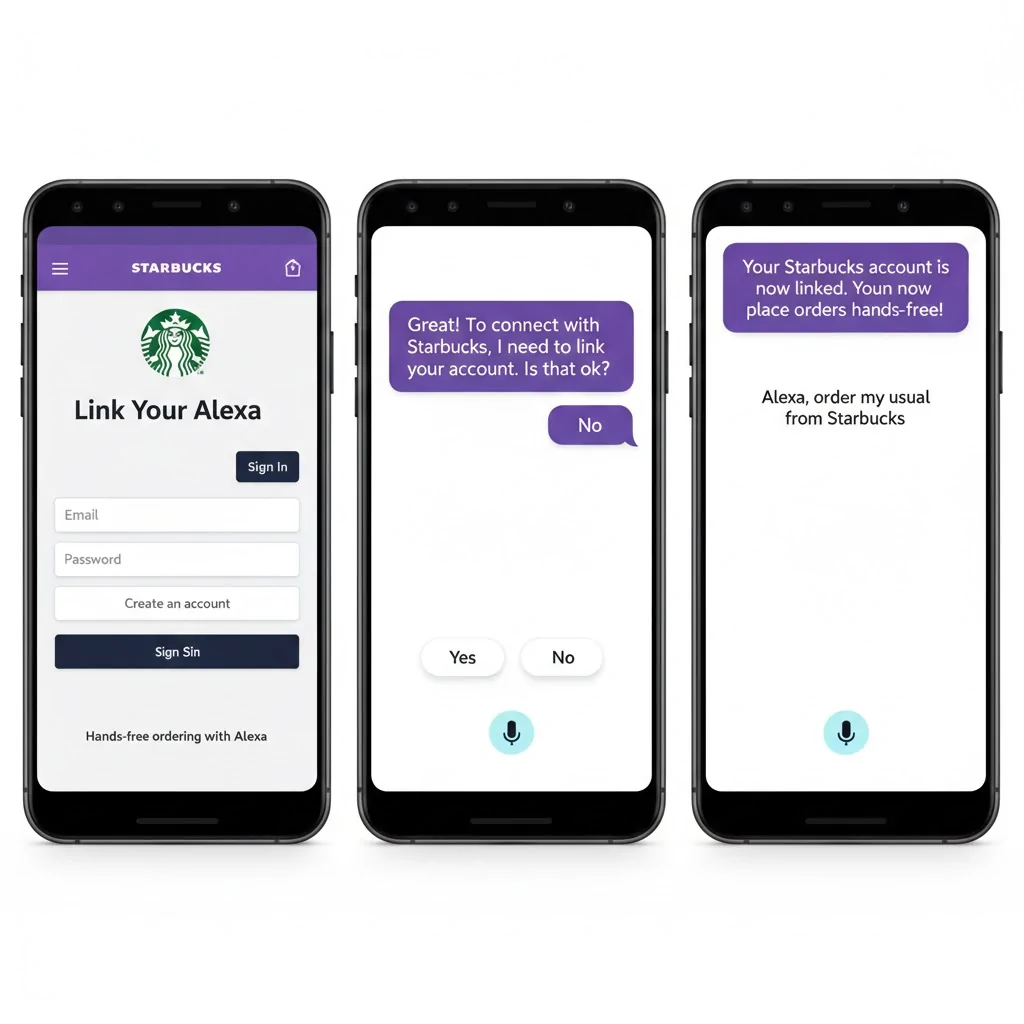

Example 2: Quick Service with Starbucks (Alexa)

Starbucks utilizes Amazon Alexa to simplify the morning coffee routine through voice-enabled reordering.

- The Flow: Customers can place their “usual” order through any Alexa-enabled device.

- The Pickup: After the voice command, the order is sent to the nearest store, and the customer simply picks it up without waiting in line or using a screen.

- Contextual Ease: This is the perfect example of voice commerce in a hands-free environment (like driving or getting ready for work).

Where Voice Commerce Works Best

Voice shopping is not ideal for everything. It works best for:

- Reorders: Quickly replenishing items you’ve bought before.

- Low-decision products: Groceries, supplements, and household essentials.

- Subscriptions: Managing recurring orders like coffee or vitamins.

It is less effective for fashion browsing or luxury items that require high-quality visuals and complex product comparisons. Voice is about speed and utility, not exploration.

What a Voice-Enabled Website Actually Needs

For voice commerce to function properly, the website behind it must support:

Clean Product Data

Products must have:

- Structured attributes

- Clear pricing rules

- Machine-readable variations

Messy catalogs break voice interactions instantly.

API-First Architecture

Voice assistants do not “browse” your website.

They call APIs.

Your backend must allow:

- Search by intent

- Real-time price checks

- Inventory validation

- Secure payment confirmation

Without this, voice commerce becomes unreliable.
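To show what such a backend call might look like, here is a minimal sketch of an add-to-cart handler that a voice assistant could invoke. The in-memory catalog, SKU names, and response shape are assumptions for illustration; a real backend would sit behind an authenticated HTTP API and a live inventory service.

```python
# Hypothetical catalog; in production this would be a database or inventory service.
CATALOG = {
    "milk-1l": {"name": "Milk 1 L", "price": 1.49, "stock": 12},
    "coffee-500g": {"name": "Ground coffee 500 g", "price": 7.99, "stock": 0},
}


def handle_add_to_cart(sku: str, quantity: int) -> dict:
    """Validate product, stock, and price, and return text for the assistant to speak."""
    item = CATALOG.get(sku)
    if item is None:
        return {"ok": False, "speech": "I couldn't find that product."}
    if item["stock"] < quantity:
        return {"ok": False, "speech": f"There isn't enough stock of {item['name']}."}
    total = round(item["price"] * quantity, 2)  # real-time price check
    return {
        "ok": True,
        "total": total,
        "speech": f"Added {quantity} x {item['name']} for ${total:.2f}. Shall I confirm?",
    }


print(handle_add_to_cart("milk-1l", 2)["speech"])
print(handle_add_to_cart("coffee-500g", 1)["speech"])
```

Every branch returns something the assistant can say aloud, which is the practical meaning of API-first here: the response is data plus speech, not a rendered page.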

Conversational UX Design

Voice UX is not web UX.

Instead of:

- Menus

- Filters

- Grids

You design:

- Clarification prompts

- Confirmation logic

- Short summaries

- Error recovery responses

Example of bad UX:

“Sorry, I didn’t understand.”

Example of good UX:

“Did you mean the 500g or 1kg option?”
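The difference between the two responses can be expressed as a small rule: never return a dead end when the system knows which options it is unsure about. The function below is a hypothetical sketch of that rule, with assumed wording and an assumed cut-off of three spoken options.

```python
def clarification_prompt(matches):
    """Turn ambiguous variant matches into a spoken follow-up question."""
    if not matches:
        return "I couldn't find that item. Could you name the product again?"
    if len(matches) == 1:
        return f"Adding the {matches[0]} option. Is that right?"
    if len(matches) > 3:
        # Too many options to read aloud; ask for a narrowing detail instead.
        return "I found several options. Could you tell me the brand or size?"
    options = ", ".join(matches[:-1]) + " or " + matches[-1]
    return f"Did you mean the {options} option?"


print(clarification_prompt(["500g", "1kg"]))
# Did you mean the 500g or 1kg option?
```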

A Simple Website Flow vs Voice Flow

| Website Shopping | Voice Shopping |
| --- | --- |
| Browse category | State intent |
| Apply filters | System resolves constraints |
| Compare products visually | System ranks and summarizes |
| Add to cart | Confirm verbally |
| Checkout form | Payment confirmation |

Voice compresses the entire process into dialogue.

Key Benefits of Voice Commerce for eCommerce Brands

Voice commerce offers operational and strategic benefits that differ from traditional UI-based commerce. The primary advantage is reduced interaction friction, as users bypass visual navigation entirely. This compression of the decision path increases task completion speed for repeat and utility-driven purchases.

Another benefit is deeper integration with eCommerce personalization AI. Voice assistants can leverage historical orders, preferences, and contextual signals to propose relevant options proactively. Studies on personalized dialogue systems show increased user satisfaction when recommendations are embedded conversationally.

Voice commerce also expands reach into new usage contexts. Shopping actions can occur while users are driving, cooking, or multitasking, scenarios unsuitable for screen-based interfaces. This context expansion creates incremental demand rather than merely shifting channels.

From a data perspective, voice interactions generate rich conversational signals. These signals provide insight into natural language demand articulation rather than keyword-driven intent. Brands that analyze this data gain strategic advantage in product positioning and taxonomy design.

The table below summarizes key benefits and their technical implications.

| Benefit | Technical Implication | Business Impact |
| --- | --- | --- |
| Reduced friction | Conversational state management, intent ranking | Faster repeat purchases |
| Personalization | User embeddings, preference models | Higher conversion rates |
| Contextual reach | Multimodal device integration | New demand channels |
| Natural language data | NLP analytics pipelines | Improved merchandising insights |

A closing observation is that benefits materialize only when voice systems are tightly integrated with core eCommerce infrastructure. Isolated voice skills without backend intelligence produce limited commercial value.

Voice Commerce Use Cases in Modern eCommerce

Voice commerce use cases vary by product category, purchase frequency, and user intent complexity. The most effective applications prioritize low-ambiguity tasks and high repetition. These constraints reduce error rates in conversational flows.

Use cases increasingly align with conversational commerce models rather than traditional browsing metaphors. Instead of navigating categories, users express goals, constraints, and preferences verbally. This shifts UX design toward dialogue optimization rather than visual hierarchy.

Voice Search and Product Discovery

Voice search eCommerce focuses on translating open-ended queries into structured product retrieval. Queries such as “find running shoes under one hundred dollars” require semantic parsing and constraint resolution. Ranking models must balance relevance, availability, and personalization simultaneously.
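To illustrate constraint resolution, the sketch below turns the example query into a structured filter. It is a rule-based toy, not the semantic parsing a production system would use, and the number-word map and command prefixes are assumptions that cover only a few cases.

```python
import re

# Tiny illustrative map; a real system would use a proper number parser.
NUMBER_WORDS = {"fifty": 50, "one hundred": 100, "two hundred": 200}


def parse_query(text: str) -> dict:
    """Extract a product phrase and an optional price ceiling from a transcript."""
    text = text.lower().strip()
    max_price = None
    m = re.search(r"\bunder\s+(.+?)\s+dollars?\b", text)
    if m:
        amount = m.group(1)
        if amount.isdigit():
            max_price = float(amount)
        else:
            value = NUMBER_WORDS.get(amount)
            max_price = float(value) if value is not None else None
        text = text[:m.start()] + text[m.end():]  # drop the price phrase
    product = re.sub(r"^(find|show me|search for)\s+", "", text).strip()
    return {"product": product, "max_price": max_price}


print(parse_query("find running shoes under one hundred dollars"))
# {'product': 'running shoes', 'max_price': 100.0}
```

The structured output is what the ranking layer consumes: a product phrase for retrieval and a hard constraint for filtering.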

Unlike typed search, voice queries are longer and more natural. This aligns with advances in semantic search and dense vector retrieval models. These models outperform keyword matching in conversational contexts.

A core challenge is result summarization. Voice interfaces cannot present large result sets, so systems must select and justify limited options. This necessitates explainable recommendation logic and confidence scoring.
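A minimal sketch of that selection step follows. The candidate list, the `score` field, the cut-off of two spoken results, and the confidence floor are all assumptions; in practice the score would come from the ranking model.

```python
def summarize(candidates, k=2, min_score=0.5):
    """Pick at most k in-stock results above a confidence floor and phrase them for speech."""
    in_stock = [c for c in candidates if c["in_stock"]]
    ranked = sorted(in_stock, key=lambda c: c["score"], reverse=True)
    top = [c for c in ranked[:k] if c["score"] >= min_score]
    if not top:
        return "I couldn't find a confident match. Can you add a detail such as brand or size?"
    parts = [f"{c['name']} at ${c['price']:.2f}" for c in top]
    return "Top matches: " + "; ".join(parts) + "."


# Hypothetical ranked candidates for a running-shoe query.
candidates = [
    {"name": "Trail Runner", "price": 89.0, "score": 0.91, "in_stock": True},
    {"name": "Road Racer", "price": 95.5, "score": 0.78, "in_stock": True},
    {"name": "Sprint Pro", "price": 99.0, "score": 0.95, "in_stock": False},
]
print(summarize(candidates))
# Top matches: Trail Runner at $89.00; Road Racer at $95.50.
```

Note that the highest-scoring item is excluded because it is out of stock, and that a low-confidence result set produces a clarifying question rather than a weak recommendation.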

Voice-Enabled Checkout and Reordering

Voice-enabled checkout is most effective for replenishment and habitual purchases. Users issue commands such as “reorder my usual coffee,” relying on stored preferences and payment credentials. This requires deterministic order mapping and strong confirmation logic.
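Deterministic order mapping with a confirmation step might look like the sketch below. The stored-preference table, user ID, and accepted confirmation words are hypothetical; payment and authentication are deliberately left out.

```python
# Hypothetical stored preferences: (user, spoken category) -> exact order.
USUAL_ORDERS = {("user-1", "coffee"): {"sku": "coffee-500g", "quantity": 1}}


class ReorderSession:
    """Two-step flow: map the request to one stored order, then require confirmation."""

    def __init__(self, user_id: str):
        self.user_id = user_id
        self.pending = None

    def request(self, category: str) -> str:
        order = USUAL_ORDERS.get((self.user_id, category))
        if order is None:
            return f"I don't have a usual {category} order saved for you."
        self.pending = order
        return f"Reorder {order['quantity']} x {order['sku']}? Say yes to confirm."

    def confirm(self, reply: str) -> str:
        if self.pending is None:
            return "There is nothing to confirm."
        order, self.pending = self.pending, None  # a pending order is used once
        if reply.strip().lower() in {"yes", "confirm"}:
            return f"Order placed: {order['quantity']} x {order['sku']}."
        return "Okay, I cancelled that order."


session = ReorderSession("user-1")
print(session.request("coffee"))
print(session.confirm("Yes"))
```

The lookup is an exact key match rather than a fuzzy search, so "my usual coffee" can only ever resolve to one order, and nothing is placed until the user explicitly confirms.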

Security is enforced through voice biometrics, device authentication, or secondary confirmation steps. Research into speaker verification systems demonstrates improving accuracy in consumer environments. These mechanisms are critical for transaction trust.

Voice reordering sidesteps cart abandonment by removing the cart step altogether. The transaction becomes a single conversational exchange. This model redefines conversion optimization around intent clarity rather than UI persuasion.

UX & Technical Challenges of Voice Commerce